Remember when the DALL·E AI image generator first became accessible to everyone back in 2022?

That same year, Forbes reported that over 1.5 million users were creating more than two million images per day using DALL·E.

Chances are, if you’ve dabbled with AI-generated art, DALL·E was your first stop, too.

But those early days of using AI only for fun are long gone. Today, AI-generated images are being used for business purposes.

A March 2023 study found that 36% of marketers are now using AI to create website visuals, while 39% use it for social media content.

Yet, while many embrace AI’s creative potential, few truly understand how AI image generation works behind the scenes.

How does an AI model go from analyzing millions of images to producing a brand-new, never-before-seen visual based on a simple text prompt?

That’s exactly what I’ll walk you through in this guide. We’ll cover what AI image generation is, how it works, which AI models are behind the scenes, and more.

So let’s start.

What Is AI Image Generation?

AI image generation is the process of using artificial intelligence models to create visuals from scratch.

You just give a few lines of text to an AI image generator, and an algorithm that’s been trained on an absurdly large dataset of images comes up with an image in seconds. If you’ve ever wondered how to generate AI images, the process is surprisingly simple for the user: type a text prompt, and the model translates your words into a brand-new visual.

The process involves no paintbrushes or cameras.

The algorithm has been trained on a huge range of paintings, photos, and digital artworks, and can now produce something completely new based on your instructions.

By completely new, I mean just about anything a human mind can think of, whether real or imaginary.

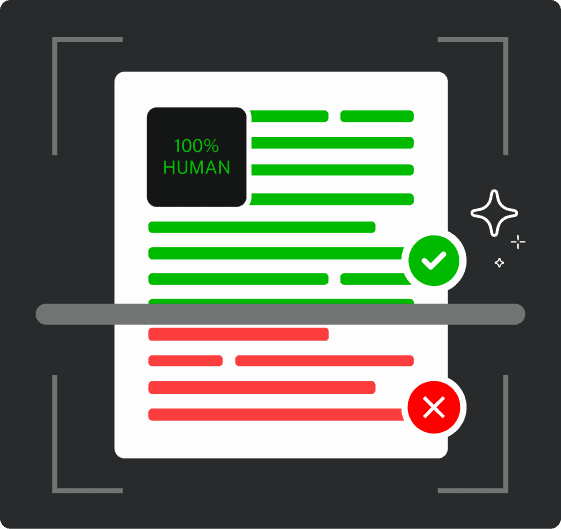

For example, ask for “a cyberpunk city at sunset,” and AI will create a never-before-seen image that matches your description.

And no, the AI won’t be pulling from a pre-existing photograph or copying another artwork. It generates something entirely unique each time.

But how do the images turn out?

Well, the images are sometimes stunning. Sometimes hilariously off. (Ever asked an AI to generate human hands? Good luck.)

Complex scenes with precise interactions between objects can sometimes confuse the AI, leading to visual glitches that look like they belong in an alternate reality.

However, newer models have shown great improvement at drawing hands, feet, and other intricate details.

Some major AI image generators include:

- DALL·E

- Stable Diffusion

- MidJourney

- Craiyon

Each of these has its own strengths. Some are good at photorealism, while others are better at stylized art.

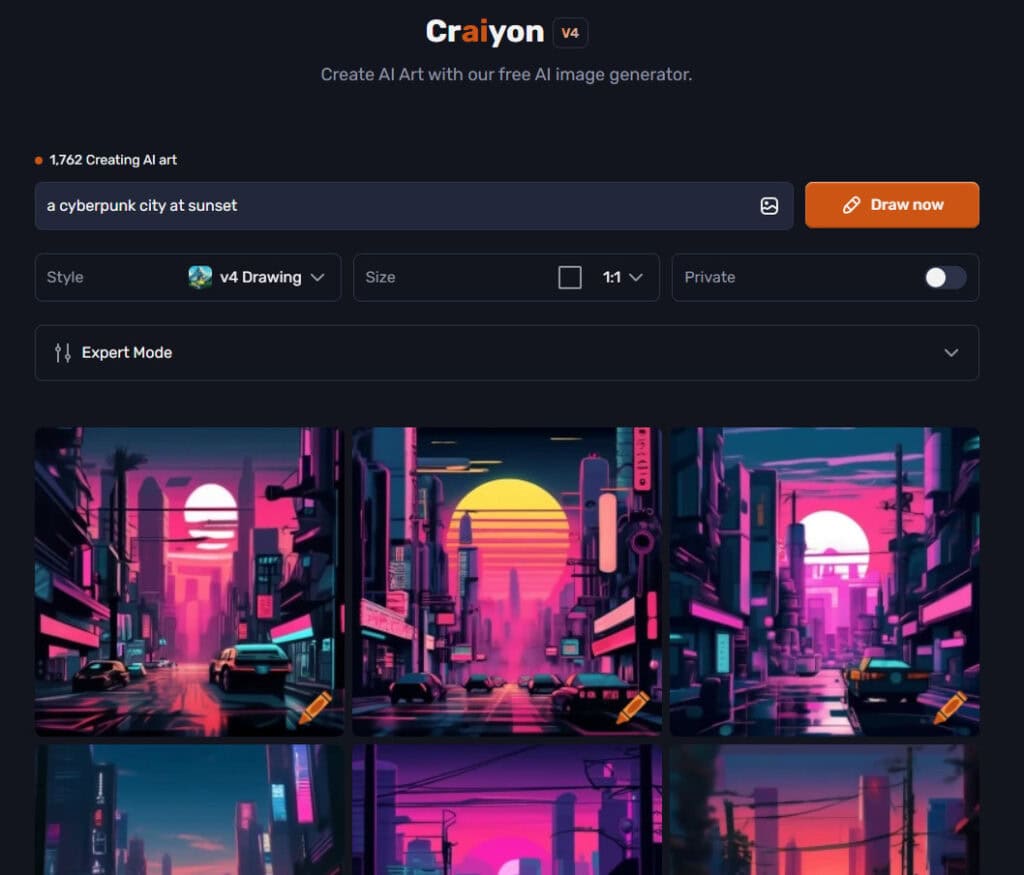

Take a look at this pixel art image by Stable Diffusion:

So, how does AI actually do this on a technical level? Let’s break down how AI image generation works.

How AI Uses Machine Learning to Create Images

The main player behind AI image generation is machine learning, or ML for short.

Machine learning is a branch of AI in which algorithms learn patterns, recognize relationships, and generate new data without much human intervention.

Thanks to their training on massive datasets, ML models learn what objects, colors, and textures should look like all by themselves.

Now, there are two main techniques for training these models:

- Supervised learning: The AI is shown images along with their descriptions, helping it associate words with visual elements.

- Unsupervised learning: The AI learns by analyzing patterns in massive datasets without human-labeled instructions, making sense of visual information on its own.

On a more technical level, neural networks are the underlying technology here.

These are computer models loosely inspired by the human brain that process information in layers, with each layer building on the output of the one before it.
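
To make "processing in layers" concrete, here is a minimal sketch of a two-layer network in plain Python. The weights are hand-picked for illustration (a real model learns millions of them), and the function names `dense`, `relu`, and `forward` are my own, not from any particular library.

```python
def relu(values):
    # Keep positive signals, zero out negative ones.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # Each output is a weighted sum of all inputs plus a bias.
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def forward(pixels):
    # Layer 1 (hypothetical weights): respond to brightness drops
    # between neighboring pixels, a crude "edge detector".
    hidden = relu(dense(pixels,
                        [[1.0, -1.0, 0.0], [0.0, 1.0, -1.0]],
                        [0.0, 0.0]))
    # Layer 2: combine the edge signals into one "edge present" score.
    output = dense(hidden, [[1.0, 1.0]], [0.0])
    return hidden, output

edge_hidden, edge_output = forward([0.9, 0.1, 0.1])   # bright pixel, then dark
flat_hidden, flat_output = forward([0.5, 0.5, 0.5])   # uniform brightness
```

The first layer only sees raw pixel values, while the second layer only sees what the first layer reported. Stacking many such layers is what lets a network go from edges to shapes to whole objects.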

Of course, this is just the beginning.

Next, you’ll learn the step-by-step process of how image generation AI actually works.

How AI Image Generation Works (Step-by-Step)

While we’ve covered the broad strokes, how does AI image generation work in practice?

The actual process isn’t as simple as pressing a button and watching magic happen. Behind every AI-generated image is a carefully structured pipeline.

Here’s a bird’s-eye view of that pipeline.

1. Training on Massive Image Datasets

Before an AI model can generate images, it first needs to see a lot. And by a lot, I mean millions (or even billions) of images, often scraped from the internet.

These images are paired with textual descriptions that help the AI understand how words relate to visual elements.

When it sees “a fluffy golden retriever lying in the sun,” it learns that “fluffy” refers to texture, “golden” refers to color, and “lying in the sun” affects lighting and shadows.

This phase is critical because an AI model is only as good as its training data.

If the dataset is unbalanced, say, mostly Western-style art or biased depictions of certain professions, the AI’s outputs will reflect those biases.

This is why researchers curate and rebalance datasets for diversity and fairness, to prevent mishaps like AI-generated CEOs defaulting to middle-aged white men.
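
As a rough illustration of what checking a dataset for balance can look like, the sketch below counts how often each label appears and flags the ones that fall under a chosen share. The labels, the 20% threshold, and the helper names are all hypothetical. Real audits are far more involved.

```python
from collections import Counter

def label_shares(labels):
    # Fraction of the dataset that carries each label.
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}

def underrepresented(labels, min_share):
    # Labels whose share of the dataset falls below min_share.
    shares = label_shares(labels)
    return sorted(label for label, share in shares.items() if share < min_share)

# Hypothetical art-style tags for ten training images.
styles = ["western"] * 8 + ["east_asian"] * 1 + ["african"] * 1

shares = label_shares(styles)
flagged = underrepresented(styles, min_share=0.2)
```

Here `flagged` lists the two styles that make up only 10% of the data each, which is the kind of skew that later shows up in a model's default outputs.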

2. Using Neural Networks to Recognize Features

Once the AI has ingested a mountain of images, it begins processing patterns using neural networks.

Since memorizing specific images isn’t practical and would be painfully limiting, the AI breaks them down into numerical values, spotting trends and assigning probabilities to relationships.

For example, it learns that guitars are usually associated with hands, that cats tend to have whiskers, and that sunlight casts soft shadows.
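
One simplified way to picture "assigning probabilities to relationships" is to count how often two concepts show up together. The sketch below does this over a handful of made-up captions. Keep in mind this is only an analogy: real models encode these associations implicitly in their network weights rather than in an explicit table, and the captions and function name are invented for the example.

```python
# Hypothetical caption tags standing in for a labeled training set.
captions = [
    {"cat", "whiskers", "sofa"},
    {"cat", "whiskers", "window"},
    {"cat", "sofa"},
    {"guitar", "hands", "stage"},
    {"guitar", "hands"},
]

def conditional_probability(captions, given, target):
    """Estimate P(target appears | given appears) by counting captions."""
    with_given = [tags for tags in captions if given in tags]
    if not with_given:
        return 0.0
    return sum(1 for tags in with_given if target in tags) / len(with_given)

p_whiskers_given_cat = conditional_probability(captions, "cat", "whiskers")
p_hands_given_guitar = conditional_probability(captions, "guitar", "hands")
p_hands_given_cat = conditional_probability(captions, "cat", "hands")
```

In this toy dataset, whiskers appear in two of the three cat captions and hands appear in every guitar caption, so the estimates come out high for those pairs and zero for "cat" and "hands".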

If you were to ask the AI for “a flamingo wearing a top hat and sunglasses, dancing on a beach at sunset, rendered in a watercolor painting style,” it won’t find an existing image to copy.

Instead, it will generate an original image by piecing together concepts it has learned (flamingo, top hat, sunglasses, beach, sunset, and watercolor style).

3. Generating Images Using AI Models

At this stage, the AI is ready to create images, but it doesn’t just paint them stroke by stroke like a human artist.

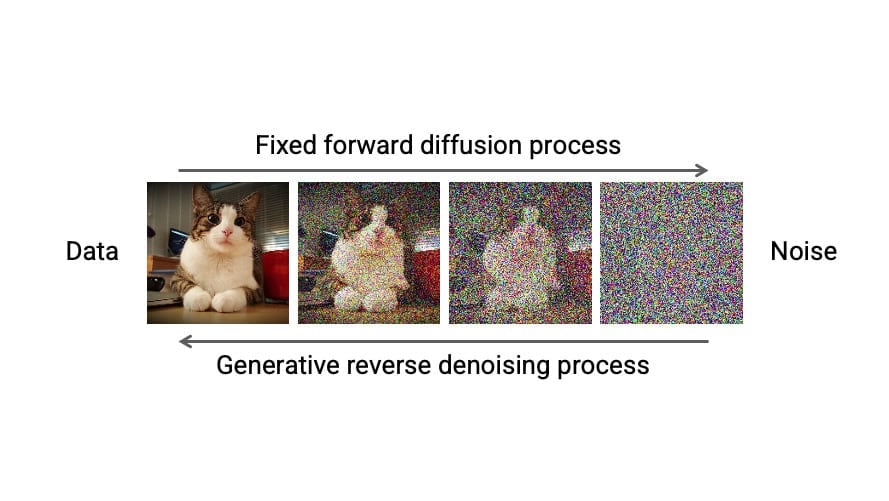

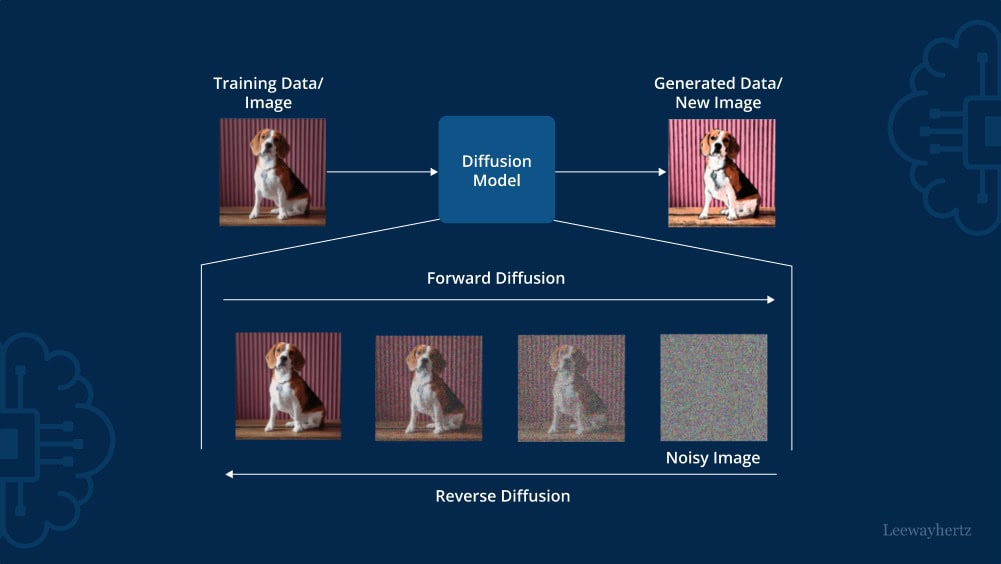

Instead, many models use a process called diffusion, a technique where the AI learns to “recover” images from visual noise.

Here’s how it works:

- Researchers add layers of random noise (like static on an old TV screen) to images during training.

- The AI learns to recognize the obscured images beneath the noise.

- It then reverses the process, gradually removing noise until it recovers a clear, detailed image.

Over time, the AI gets so good at this process that it no longer needs an original image at all.

Instead, when you enter a text prompt, the AI starts with pure noise and refines the whole image step by step until an entirely new image emerges.
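
The noising and denoising steps above can be sketched in a few lines. I’m assuming the commonly used DDPM-style formulation here, with a linear noise schedule from 0.0001 to 0.02 over 1,000 steps; the article’s generators may use different settings. The function names are hypothetical, and the example "cheats" by feeding the true noise back in where a trained network would supply its prediction.

```python
import math
import random

def make_alpha_bars(steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear noise schedule."""
    alpha_bars, running = [], 1.0
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(pixels, alpha_bar, rng):
    """Forward process: blend the clean image with Gaussian noise."""
    noise = [rng.gauss(0.0, 1.0) for _ in pixels]
    noisy = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * n
             for x, n in zip(pixels, noise)]
    return noisy, noise

def remove_noise(noisy, predicted_noise, alpha_bar):
    """Invert the blend to estimate the clean image from a noise prediction."""
    return [(y - math.sqrt(1.0 - alpha_bar) * n) / math.sqrt(alpha_bar)
            for y, n in zip(noisy, predicted_noise)]

rng = random.Random(0)
alpha_bars = make_alpha_bars(steps=1000)
image = [0.2, 0.8, -0.5, 0.1]          # four "pixels" scaled to [-1, 1]

noisy, true_noise = add_noise(image, alpha_bars[500], rng)
# A trained network would predict the noise; here we reuse the true noise
# to show that a perfect prediction recovers the original image.
recovered = remove_noise(noisy, true_noise, alpha_bars[500])
```

The whole training objective boils down to making the network’s noise prediction as close as possible to `true_noise`. At generation time there is no original image, so the model starts from random values and applies its predictions over many small steps.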

4. Refining Outputs Through Iterative Training

While AI-generated images can be jaw-droppingly realistic, the process isn’t perfect.

Sometimes, a model generates an image that looks almost right, but then you notice a bizarre extra limb or a melted-looking face. That’s where AI models need iterative training.

AI models improve through a feedback loop where they constantly compare their generated images against real ones.

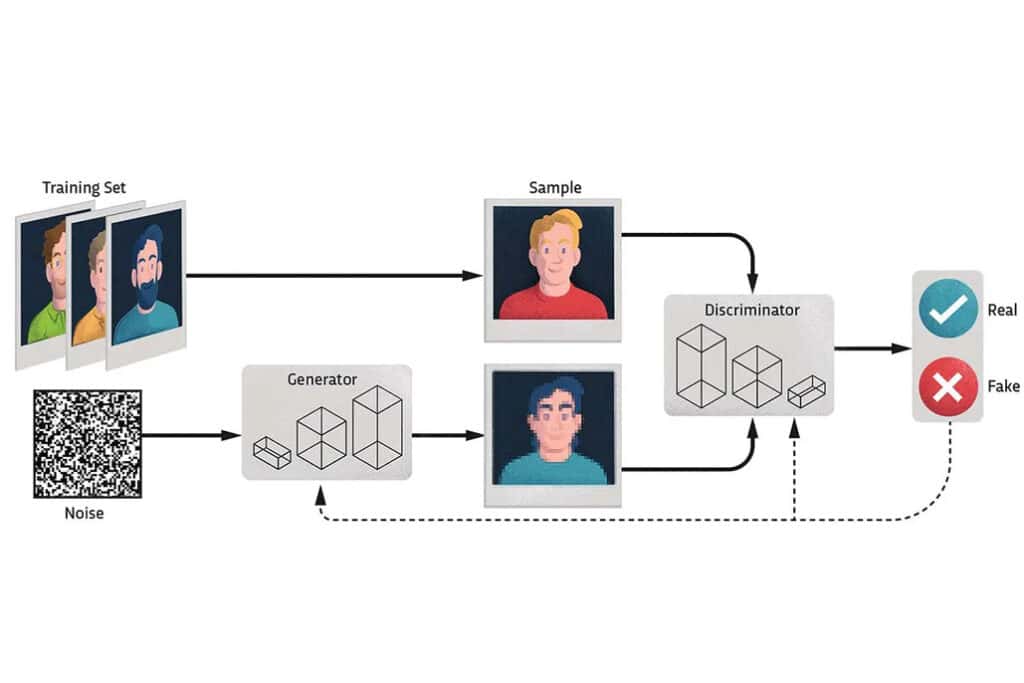

In one family of models, generative adversarial networks (GANs), this is done using two competing networks:

- A generator, which creates new images

- A discriminator, which tries to tell if those images are real or fake

The generator gets better at fooling the discriminator, and the discriminator gets better at spotting fakes.

This never-ending game pushes the AI to improve until the generated images become nearly indistinguishable from real ones.
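
To see the tug-of-war in numbers, the sketch below scores both networks with the standard binary cross-entropy losses, using the non-saturating form for the generator. The discriminator scores are invented for illustration (1.0 means "definitely real"), and no actual networks are trained here.

```python
import math

def discriminator_loss(real_scores, fake_scores):
    """Low when real images score near 1 and fakes score near 0."""
    real_term = -sum(math.log(s) for s in real_scores) / len(real_scores)
    fake_term = -sum(math.log(1.0 - s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term

def generator_loss(fake_scores):
    """Low when the discriminator mistakes fakes for real (scores near 1)."""
    return -sum(math.log(s) for s in fake_scores) / len(fake_scores)

# Hypothetical discriminator outputs at two points in training.
real_scores = [0.9, 0.8]
early_fakes = [0.1, 0.2]   # fakes are easy to spot
later_fakes = [0.6, 0.7]   # fakes have become convincing

g_early, g_late = generator_loss(early_fakes), generator_loss(later_fakes)
d_early = discriminator_loss(real_scores, early_fakes)
d_late = discriminator_loss(real_scores, later_fakes)
```

As the fakes improve, the generator’s loss falls while the discriminator’s loss rises, which is exactly the pressure that forces the discriminator to sharpen its judgment in the next round.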

With each iteration, AI models get smarter, faster, and better at understanding subtle details such as how reflections work on water, how different materials interact with light, and, yes, how to finally generate human hands that don’t look like they belong to an eldritch horror.

Types of AI Image Generation Models

Under the hood, AI image generators use different types of models to bring pixels to life.

Here are the main types.

1. Generative Adversarial Networks (GANs)

As mentioned earlier, GANs consist of two neural networks—a generator and a discriminator—that compete against each other. The generator creates images while the discriminator evaluates their authenticity.

Over time, the generator improves its ability to produce realistic images that can fool the discriminator. GANs are widely used for creating high-quality, photorealistic images.

2. Diffusion Models

Diffusion models are trained by gradually adding noise to images and then learning to reverse the process.

Starting from random noise, the model refines the image step-by-step, guided by a text prompt.

This approach is known for producing highly detailed and diverse outputs.

3. Variational Autoencoders (VAEs)

VAEs encode images into a compressed latent space and then decode them back into images. By sampling from this latent space, VAEs can generate new images that resemble the training data.

They are often used for tasks requiring controlled and structured image generation.
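
Here’s a minimal sketch of the "sample from the latent space, then decode" idea. It assumes the usual VAE setup where the encoder outputs a mean and a log-variance per latent dimension. The encoder output and the tiny linear decoder below are made up for the example, since a real VAE learns both.

```python
import math
import random

def sample_latent(mean, log_var, rng):
    """Reparameterization: z = mean + sigma * eps, with eps drawn from N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mean, log_var)]

def decode(z, weights):
    """Hypothetical linear decoder mapping a latent vector to pixel values."""
    return [sum(w * zi for w, zi in zip(row, z)) for row in weights]

rng = random.Random(42)
mean, log_var = [0.5, -1.0], [-2.0, -2.0]          # pretend encoder output
decoder_weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]

# Each draw lands at a slightly different point near the mean,
# so each decoded "image" is a new variation on the same theme.
samples = [decode(sample_latent(mean, log_var, rng), decoder_weights)
           for _ in range(3)]
```

Because nearby points in the latent space decode to similar images, nudging a latent value gives you a controlled change in the output, which is where the "structured" part of VAE generation comes from.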

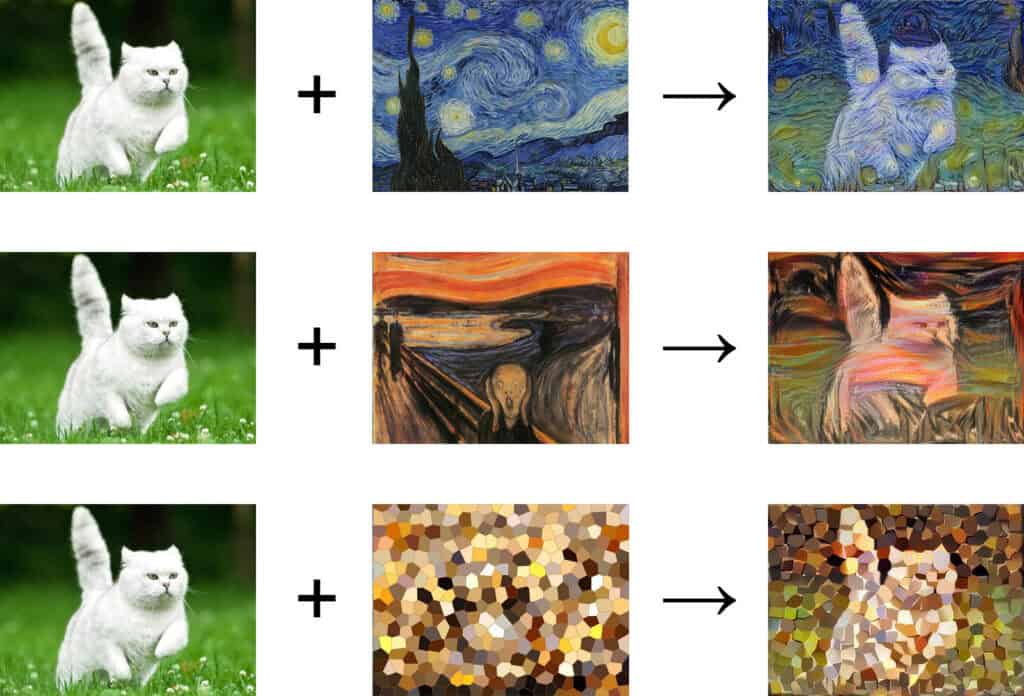

4. Neural Style Transfer (NST)

Ever wanted to see your pet’s portrait in Van Gogh’s Starry Night style? That’ll need NST’s expertise.

NST takes two existing images, one for content and one for style, and blends them.

It uses deep neural networks to isolate and blend features like textures, colors, and patterns, creating visually striking outputs that mimic the style of famous artworks or unique designs.
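
In the classic formulation of style transfer, "style" is commonly measured with a Gram matrix, which records how strongly different feature channels fire together regardless of where in the image they fire. The sketch below computes it on tiny made-up feature maps. In a real system these features would come from a pretrained network, and the function names here are my own.

```python
def gram_matrix(features):
    """features: list of channels, each a flat list of activations."""
    n = len(features[0])
    return [[sum(a * b for a, b in zip(ci, cj)) / n for cj in features]
            for ci in features]

def style_distance(features_a, features_b):
    """Sum of squared differences between the two Gram matrices."""
    gram_a, gram_b = gram_matrix(features_a), gram_matrix(features_b)
    return sum((x - y) ** 2
               for row_a, row_b in zip(gram_a, gram_b)
               for x, y in zip(row_a, row_b))

# Two channels over four spatial positions (hypothetical activations).
original = [[1.0, 0.0, 2.0, 0.0], [0.0, 1.0, 0.0, 2.0]]
# Same activations with the spatial positions reversed.
rearranged = [[0.0, 2.0, 0.0, 1.0], [2.0, 0.0, 1.0, 0.0]]
# A different texture: both channels fire everywhere.
different = [[1.0, 1.0, 1.0, 1.0], [1.0, 1.0, 1.0, 1.0]]

same_style = style_distance(original, rearranged)
other_style = style_distance(original, different)
```

Rearranging the positions leaves the style distance at zero, while a different texture gives a positive distance. That is why this measure captures brushwork and color patterns but not the layout, which is taken from the content image instead.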

Applications of AI Image Generation

What once required hours of manual design work can now be achieved in minutes with the right AI content creation tools.

Here are some of the most impactful ways AI image generation is being used today:

- Advertising creatives: Brands use AI image generators to create advertising graphics, product renders, and campaign visuals at a fraction of the cost and time of traditional design methods.

- Art: Artists and designers use AI to generate new styles, remix existing aesthetics, and explore visual concepts they might not have imagined on their own.

- Blog and social media thumbnails and images: With AI, bloggers no longer have to hunt for stock photos or rely on generic graphics. They can simply generate custom images that match their content’s theme.

- Game development and virtual worlds: Video game developers are using AI to generate detailed textures, character designs, and, sometimes, entire landscapes.

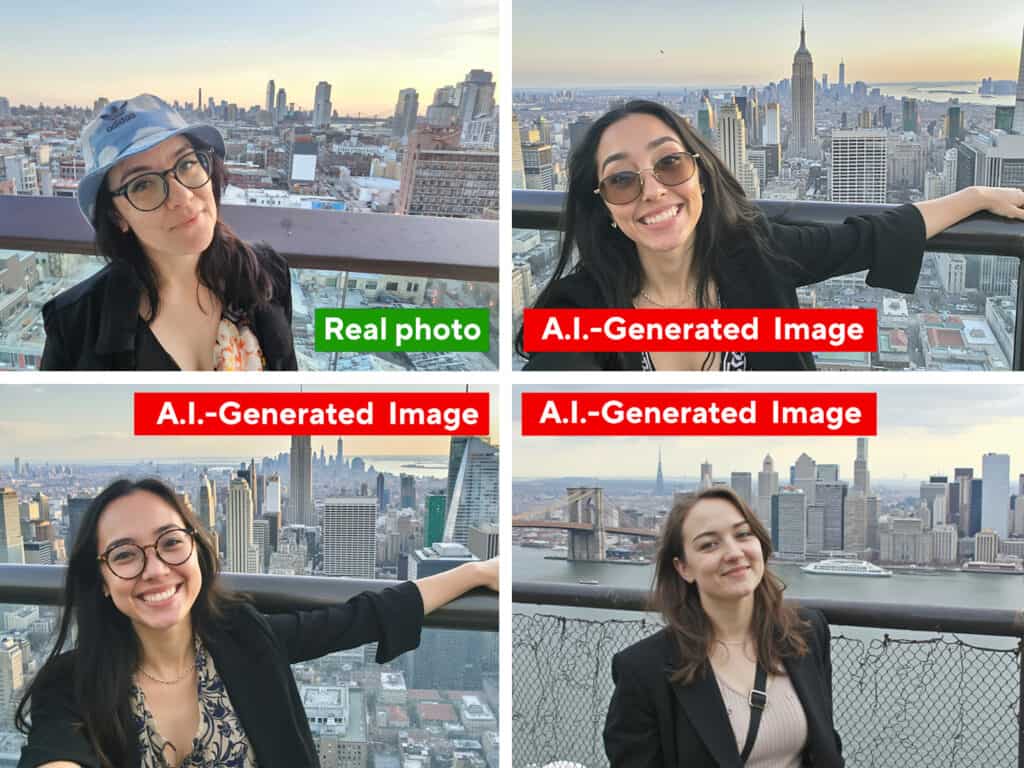

How to Verify If an Image Was AI-Generated

Spotting the difference between human-made and AI-created visuals is getting trickier as AI is generating more realistic images by the day.

However, there are a few manual techniques to verify whether an image was AI-generated.

Look for Unnatural Details

AI isn’t perfect, and sometimes, small but telling errors give it away.

Keep an eye on oddly shaped fingers, unnatural facial expressions, inconsistent lighting, or asymmetrical patterns that don’t align with real-world physics.

Even advanced AI models sometimes struggle with rendering realistic hands, eyes, or complex textures.

Check for Overly Smooth or Blurry Areas

AI-generated images often have an uncanny softness to them, especially in regions with high detail.

If an image appears too smooth, lacks fine texture, or has blurred edges where sharpness should exist, it could be the result of AI generation.
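
If you want a rough number for "too smooth," one common heuristic is the variance of the Laplacian: sharp regions produce large swings between neighboring pixels, while soft regions barely register. The sketch below runs it on small synthetic grayscale grids. The function name is my own, and a low score only tells you a region is smooth. It doesn’t prove the image is AI-generated.

```python
def laplacian_variance(gray):
    """Variance of a 4-neighbor Laplacian over the interior pixels.

    Low values suggest smooth or blurry regions; high values suggest
    sharp edges and fine texture.
    """
    responses = []
    for r in range(1, len(gray) - 1):
        for c in range(1, len(gray[0]) - 1):
            responses.append(gray[r - 1][c] + gray[r + 1][c]
                             + gray[r][c - 1] + gray[r][c + 1]
                             - 4 * gray[r][c])
    mean = sum(responses) / len(responses)
    return sum((v - mean) ** 2 for v in responses) / len(responses)

# Synthetic 6x6 test patches: a sharp checkerboard and a flat gray area.
checker = [[255 if (r + c) % 2 else 0 for c in range(6)] for r in range(6)]
flat = [[128] * 6 for _ in range(6)]

sharp_score = laplacian_variance(checker)
smooth_score = laplacian_variance(flat)
```

In practice you’d compare scores across regions of the same image, such as skin versus hair or fabric, since a sensible cutoff depends on resolution and compression.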

Analyze Shadows and Reflections

One of AI’s weak spots is accurately replicating the way light interacts with objects.

Reflections in mirrors or windows might not match the actual scene, and shadows can appear inconsistent or physically impossible.

If something about the lighting seems “off,” it’s worth investigating further.

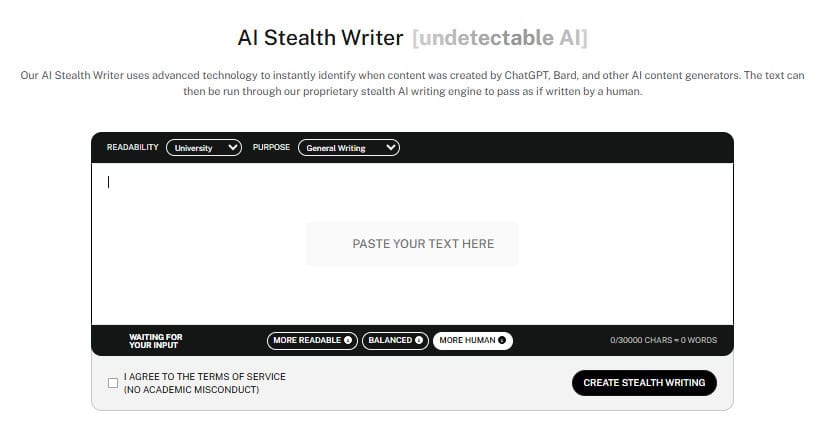

Use Reverse Image Search

If you suspect an image might be AI-generated, try running a reverse image search.

You can use Google’s image search feature for this purpose.

AI-generated images often don’t have an origin on the web, unlike stock photos or user-generated content.

If an image doesn’t show up in search results, it might be AI-created, although a missing match alone isn’t proof.

Zoom In and Inspect the Fine Details

At a quick glance, AI images can look flawless.

But when zoomed in, strange artifacts, repeating textures, or distortions in small details (like the pattern of hair or fabric) might become noticeable.

Despite all these manual methods, there are many finer details that the human eye simply cannot catch.

But with AI image detectors now available, we don’t need to inspect every image manually.

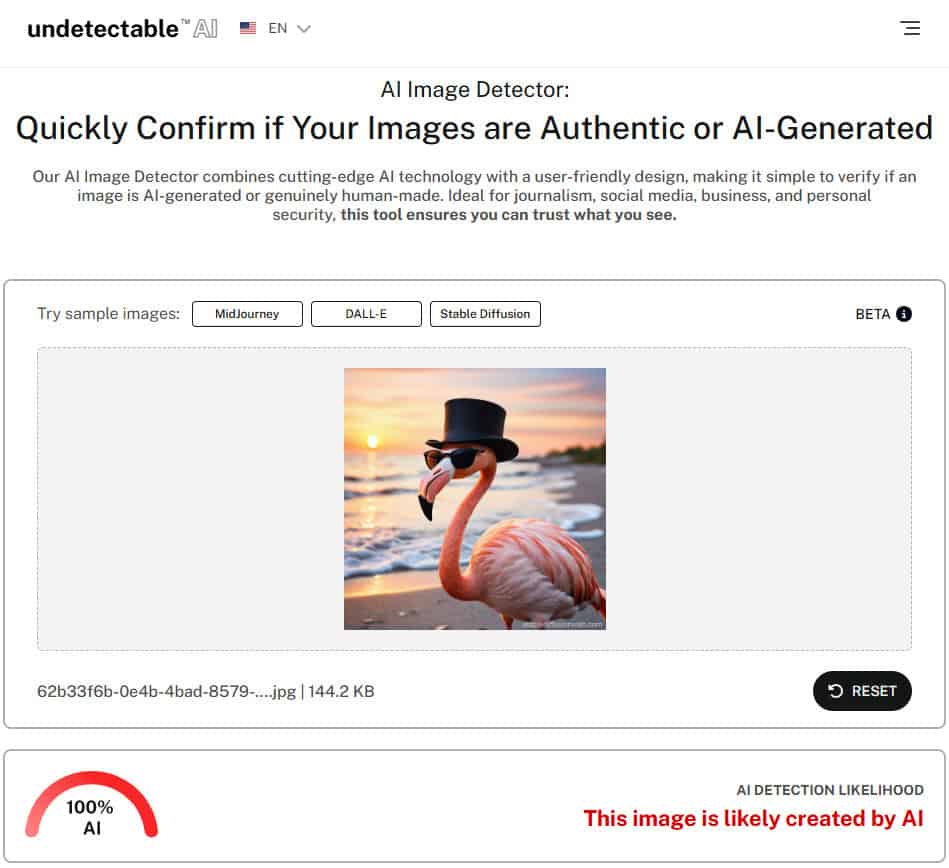

Take Undetectable AI’s AI Image Detector, for example.

You simply need to upload the picture and the detector, using machine learning algorithms, analyzes the image at a deeper level to detect AI fingerprints that may not be visible to the naked eye.

Remember the Flamingo Hat image generated by Stable Diffusion AI from a few sections back?

It couldn’t fool Undetectable AI. See for yourself below.

So, if you’re unsure whether an image is AI or not, use Undetectable AI’s AI image detector to get the answer.

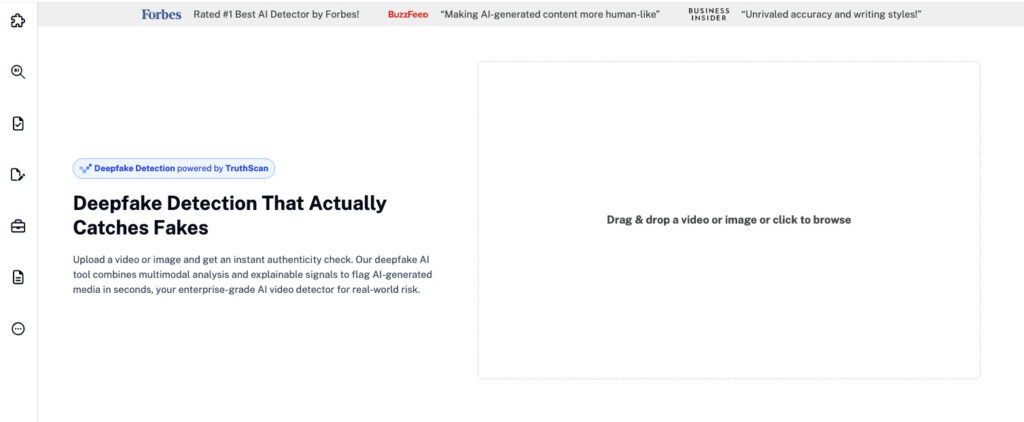

Also, try using TruthScan’s AI Image Detector.

Simply upload your image, and it will automatically analyze every pixel, texture, and metadata layer to uncover subtle signs of AI manipulation that even professionals might overlook.

It’s a quick, reliable way to verify whether a visual was genuinely captured or crafted by AI before you trust or share it.

Detecting Subtle AI Markers with Deepfake Detection

For visuals that look almost too real, Undetectable AI’s Deepfake Detection takes image verification to the next level.

It identifies the subtle inconsistencies that reveal synthetic visuals like irregular lighting, unnatural facial blending, or pixel-level distortions invisible to the naked eye.

Simply upload your image or video to Deepfake Detection to receive a clear authenticity score with visual heatmaps showing where manipulation might have occurred.

By pairing Deepfake Detection with the AI Image Detector, you can verify both surface-level and deep structural cues, ensuring complete confidence in your visuals’ authenticity.

Final Thoughts

AI image generation is no longer a futuristic concept.

It’s here, it’s evolving, and it’s becoming a fundamental part of digital content creation.

So understanding how AI image generation works gives you a crucial edge today, whether in the job market or in your personal circle.

At the same time, being able to tell AI-generated images apart from real ones is equally important, given their growing use in deepfakes.

This ability will also help you spot AI clues in your images so you can remove them to bypass AI content detection.

But with Undetectable AI’s AI image detector, that is entirely our headache.

Using advanced machine learning algorithms, our detector can identify AI-generated images with precision.

Don’t take our word for it when you can test it out yourself.