You’ve probably seen more AI-generated photos online than you realize.

Sometimes it’s obvious that a picture was generated by AI, but it’s getting harder to tell as generative image and video tools improve. New tools like Google’s Nano Banana Pro and updates to OpenAI’s ChatGPT image model let users quickly generate synthetic images that mirror real ones. Previous research found that 85% of Americans say deepfakes are eroding trust online.

Multiple tools claim to detect AI-generated images, but do they work?

I ran 50 detection checks across five of the most popular AI-image detectors and documented the results. Not only will I present all the data in this article and explain it, I’ll also link to the documentation at the end.

The five detectors I used for these tests were: TruthScan, AI or Not, Sight Engine, WasItAI, and Winston AI. I tested these tools by having each analyze two ChatGPT-generated images, six Nano Banana-generated images, and two images generated by Midjourney. I used multiple prompting styles and techniques (which I’ll go into detail about later when I break down each analyzed image).

All five detectors were tasked with spotting AI-created images across categories such as fraud, disinformation, general photography, and deepfakes. Unsurprisingly, not all of the detectors performed well. TruthScan was the only detector to consistently classify all of the content I submitted at 97% or higher.

🔍 AI Image Detector Test Results

| Detection Tool | Total Tests | Correctly Detected | Missed (Fails) | Accuracy |
|---|---|---|---|---|
| TruthScan | 10 | 10 | 0 | 100% |
| AI or Not | 10 | 8 | 2* | 80% |
| Sight Engine | 10 | 7 | 3 | 70% |
| WasItAI | 10 | 6 | 4 | 60% |
| Winston AI | 10 | 3 | 7 | 30% |

* AI or Not scored four AI generations below the 90% threshold: 77.99%, 85.87%, 89.21%, and 89.52%. Since the test requires at least 90%, I count the first two as fails but treat the two 89% scores as near-misses, hence the asterisk.
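The accuracy column is just correct detections divided by total tests. Here is a minimal Python sketch of that arithmetic, using the counts from the summary table; the `summary` and `accuracy` names are illustrative and not part of any detector's API.

```python
# Counts copied from the summary table: (total tests, correctly detected).
summary = {
    "TruthScan": (10, 10),
    "AI or Not": (10, 8),
    "Sight Engine": (10, 7),
    "WasItAI": (10, 6),
    "Winston AI": (10, 3),
}


def accuracy(total: int, correct: int) -> float:
    """Return the share of tests detected correctly, as a percentage."""
    return 100.0 * correct / total


# Compute the accuracy for every detector and print one line per tool.
accuracies = {
    name: accuracy(total, correct) for name, (total, correct) in summary.items()
}
for name, pct in accuracies.items():
    print(f"{name}: {pct:.0f}%")
```

With only 10 tests per detector, each single result moves the accuracy figure by 10 percentage points, which is worth keeping in mind when comparing tools that are one or two detections apart.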

AI or Not also performed pretty well. In fact, while AI or Not classified multiple AI-generated items below 90% certainty, it was the second-most accurate and consistent detector during my tests, behind TruthScan. Even where AI or Not failed, those failures weren’t catastrophic, but there’s still room for improvement.

The rest of the AI image detectors I tried performed far worse. For example, Sight Engine misclassified three fraud-related AI images as authentic.

Now I’ll show you each of the 10 images I generated, explain how I created it, and show how each detector scored it.

#1. “Man On a Ledge” (generated by ChatGPT)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| ChatGPT Full Generation | General | 99.00% AI | 99.00% AI | 77.99% AI | 0.98% AI | 1.00% AI |

To me (and I see a lot of AI-generated content daily), the image looks pretty convincing at first glance. If you clicked on someone’s social media profile, scrolled through their feed, and saw this image, would it immediately stand out as an AI-generated fake? To be fair, I tried to get creative with the prompt. Here’s the one I used to generate this image:

“Generate me a snapshot camera style photo of a man standing on top of a roof looking down on the street, nightime, he’s illuminated by the camera flash, theres light traces from the cars below, a woman stands behind him with her hand on her lips, smiling, the aesthetic of the photo is like an a photo taken on a snapshot camera in 2009 by college friends messing around.“

Regardless of how creative I got or how many details I included in my prompt, TruthScan and Sight Engine still correctly classified the output as AI-generated. AI or Not got close at 77.99%, but still missed the mark.

Winston and WasItAI were flat-out wrong — classifying the ChatGPT image as real.

#2. “The Fake Receipt” (generated by ChatGPT)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| ChatGPT Full Generation | Fraud | 99.00% AI | 19.00% AI | 94.48% AI | 0.04% AI | 1.00% AI |

I wanted to generate a fake receipt with ChatGPT to see how realistic it would look. You might notice that the image lacks a legitimate address, but the texture still looks slightly realistic. I’d say this image is less compelling than the first I had ChatGPT generate, yet most of the detectors still tanked when analyzing it.

- Out of all the checks I ran on this image, TruthScan was the most accurate.

- Sight Engine really failed here, showing poor judgment on a document-related AI image.

- AI or Not performed noticeably better in this second analysis.

- Winston AI performed the worst, and WasItAI also completely failed.

Given that this image is associated with the fraud category, it’s concerning that most of the detectors misclassified it.

The prompt I used to generate the image

“Generate an image of a best buy receipt, that says the total amount spent is one billion dollars, and there’s a coffee stain on it“

Clearly, I wrote a quick and simple prompt. Still, the quality of the ChatGPT output is visually compelling to the naked eye. Not so compelling to two out of the five detectors I tested, it seems.

#3. “Hazard Package” (generated with Nano Banana)

Instead of just having Nano Banana generate an original image, I gave it one to alter.

The idea was to demonstrate how someone could easily use AI to create fake evidence and claim they received a damaged/hazardous package. First, I took a photo with an iPhone 15 of an empty Amazon package I found.

Here’s the photo I took:

Then, I uploaded the photo I took to Nano Banana and gave it the following prompt:

“I want you to edit this photo don’t change anything except for where the label is at add damage to it and add Black sludge Stains on the box that look real like grease stains“

Nano Banana output:

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana Deepfake Edit | Fraud | 99.00% AI | 98.00% AI | 99.10% AI | 31.98% AI | 99.00% AI |

The oil-like sludge Nano Banana generated had a convincing grimy sheen, complete with a soggy, soaked-through effect that wasn’t cartoony at all. Luckily, most of the detectors I ran it through correctly labeled the image as a fake. The only outlier was Winston, which again performed poorly, misclassifying the image as authentic. AI or Not, TruthScan, and WasItAI were the most accurate detectors in spotting this image.

It’s concerning how simple it seems to upload an image to a chatbot and have it completely altered. I can imagine fraudsters and scammers using generative image tools to try making fraudulent return claims or engage in marketplace fraud.

If e-commerce platforms only require an image as proof to initiate a damaged package claim, then, without any reliable way to detect AI tampering, they’re exposed to a major attack vector. For example, Amazon’s official item return policy literally states, “Hazmat, including flammable liquids or gases, are not returnable.”

So if you actually received a package covered in oil or greasy black sludge, you wouldn’t have to return it, but could still get a refund. If, for some reason, that actually happened to you, Amazon would likely ask for photo proof. Do you see the problem AI-generated images can pose when they’re indistinguishable from real ones?

#4. “Cockroach Meal” (generated with Nano Banana)

This next AI image might make you lose your appetite. As it turns out, there are documented cases of people using AI to receive fraudulent refunds on food delivery apps. For this fourth test, I followed the same steps as in test three. This time, I snapped a pic of my empty takeout box after lunch.

Here’s the real image:

Then I put the real photo I took into Nano Banana and gave it the following prompt:

“Edit this image. Don’t change anything except add noodles into where the foil is at and add in a bunch of little tiny baby cockroaches in the food. Make it all look realistic and not cartoonish.”

The Nano Banana Output:

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana Deepfake Edit | Fraud | 97.15% AI | 18.00% AI | 85.87% AI | 72.29% AI | 1.00% AI |

Once again, TruthScan confidently detected the AI-generated media. Sight Engine and WasItAI completely failed. AI or Not was the second-most-accurate detector (with 85% AI certainty) when scanning this AI image, and Winston performed better than it did in the third test (at 72% AI certainty).

In this image, the noodles and cockroaches were all generated entirely by AI; yet two of the detectors didn’t seem to think so, and only one (TruthScan) was above 90% certain of AI tampering.

A point of concern here was just how simple it was to create this fake image. The whole process, from uploading the photo I snapped to prompting Nano Banana and receiving the output, took only about 2 minutes. A nightmare for food delivery apps; a dream for hungry liars. A ‘fraud factor’.

DISCLOSURE: Some of the AI-generated images included in the following tests were intended to demonstrate how AI images can be used for disinformation. They contain controversial images and sensitive subjects, and are NOT intended to represent any particular political views or ideologies.

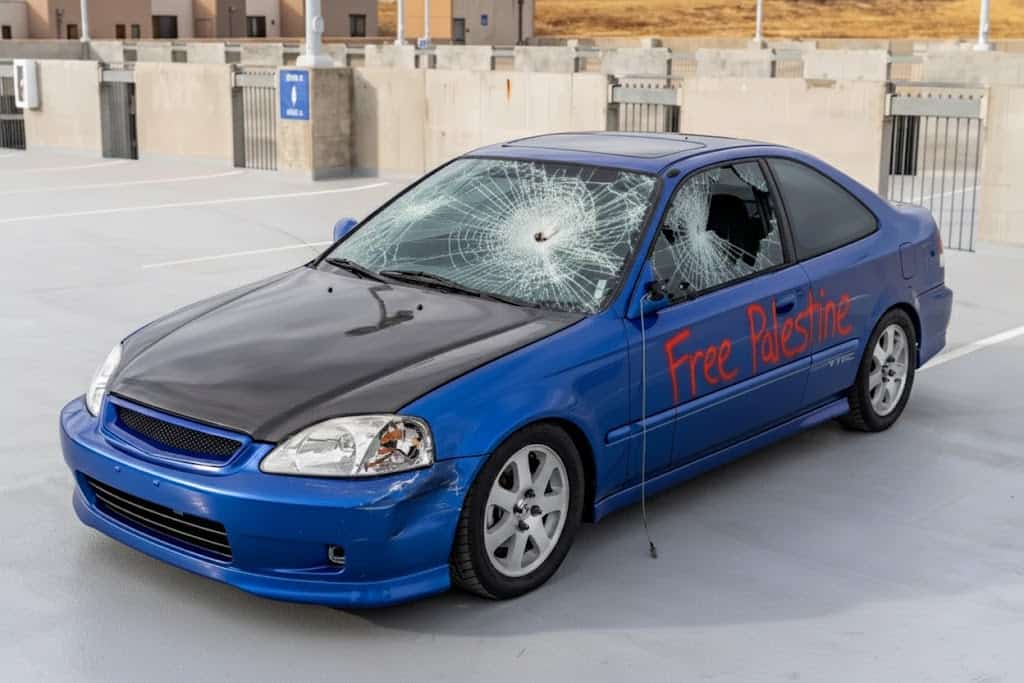

#5. “Autovandalism” (generated with Nano Banana)

I found a real image of a car for sale online. Pretty mundane. But what if someone wanted to use this image to spread disinformation, or file a bogus auto insurance claim? Sadly, generative AI makes that quick and easy.

For this fifth test, I took a real image of a car, and gave Nano Banana the following prompt:

“Don’t change anything about the car except I want you to add spray paint to it that says ‘Free Palestine’ and the windshield is cracked. The headlights are smashed out and the mirror is broken and hanging down and the two windows on the side of the car are smashed.”

Here’s the real image:

Nano Banana AI image:

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana Deepfake Edit | Fraud / Disinfo | 97.48% AI | 18.00% AI | 89.52% AI | 0.02% AI | 99.00% AI |

The detector results for this output were mixed. For this test, WasItAI (surprisingly) had the highest AI detection score (at 99%). TruthScan had the second-highest score (97%). Sight Engine failed to detect the synthetic elements in the image (18%), and Winston did the worst out of all the detectors (assigning a less than 1% chance of AI involvement). AI or Not did okay, but its score (89.52%) was still below the 90% threshold.

Let’s talk about the image. There are two especially concerning ways AI-made or AI-altered content like this can be abused, and both involve deception. First, using AI to alter mundane images to create politically charged content is fast and easy, and the results look real. Whether those making this type of content are just aiming for engagement or are political provocateurs, the problem is that it’s fake information being presented as authentic.

The second way using image generation tools like this can be abused is for fraud. If a scammer wants to file a fake auto-insurance claim, they will have to produce fake evidence. Instead of spending hours in Photoshop editing images and doctoring evidence, they can use image generators to quickly produce phony proof.

Consider how a scammer might use an image like the one above to file a fake claim under Progressive’s Comprehensive Coverage Policy, which specifically covers:

- Slashed or damaged tires

- Broken windows, headlights, or taillights

- Spray paint damage

- Dents or scratches from someone keying your car

- Putting sugar or other substances into your gas tank

Of course, submitting false evidence isn’t just deceptive, it’s also flat-out illegal. In an ideal world, nobody would break the law; in reality, crime happens every day. And without reliable detection, auto insurance companies risk being bilked for millions as AI-enabled fraudulent insurance claims increase.

#6. “Soda Musk and Don” (generated with Nano Banana)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana Full Generation | Disinfo | 99.00% AI | 99.00% AI | 99.00% AI | 0.24% AI | 86.00% AI |

Once again, I used Nano Banana for this test, and the goal was to demonstrate fake, politically charged images with a sillier undertone. TruthScan, Sight Engine, and AI or Not all flagged the generated image with a 99% AI detection rating. WasItAI wasn’t as confident, detecting the AI-generated image with only 86% certainty. And Winston completely failed, assigning an AI score of 0.24%. To generate the image, I gave Nano Banana this prompt:

“Generate a photo of Elon Musk spilling a large cup of soda like a Big Gulp on his shirt and freaking out while Donald Trump is sitting next to him laughing. They are on a jet plane. That is the scene. And the photo should look realistic like it was taken on a snapshot camera and include artifacts like the camera flash reflecting on the airplane window and outside the airplane. It’s nighttime. It should look hyper-realistic.“

Whenever one of these detectors fails to detect AI, it labels the image as authentic. Silly as this example may be, there’s nothing funny about thinking a deepfake is real.

#7. “Still Alive” (generated by Nano Banana)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana Full Generation | Deepfake | 97.49% AI | 95.00% AI | 99.27% AI | 0.15% AI | 1.00% AI |

Do you find this image disturbing? Maybe it’s because this photo shows me in a compromising position. To generate this deepfake, I gave Nano Banana a picture of myself, followed by a long prompt intended to put me in a situation that implied I’d been abducted. What if someone made something like this, sent it to my mother, and threatened to hurt me unless she sent them money? Scary stuff.

(The full prompt I used for this can be found in the full testing dataset at the end of this article.)

Luckily, despite this image’s very realistic look, three of the five detectors identified it as AI-generated.

AI or Not came in with a strong 99% AI score, TruthScan with 97%, and Sight Engine at 95%. Unfortunately, Winston and WasItAI classified the image as real.

I should clarify that these tests were not done on my personal Gemini account. Nano Banana never asked me to prove I was the person in the image. Anyone could have downloaded a photo of me from the internet, put it into Nano Banana, and had it create this type of image.

#8. “Abuse of a Ben” (generated with Nano Banana)

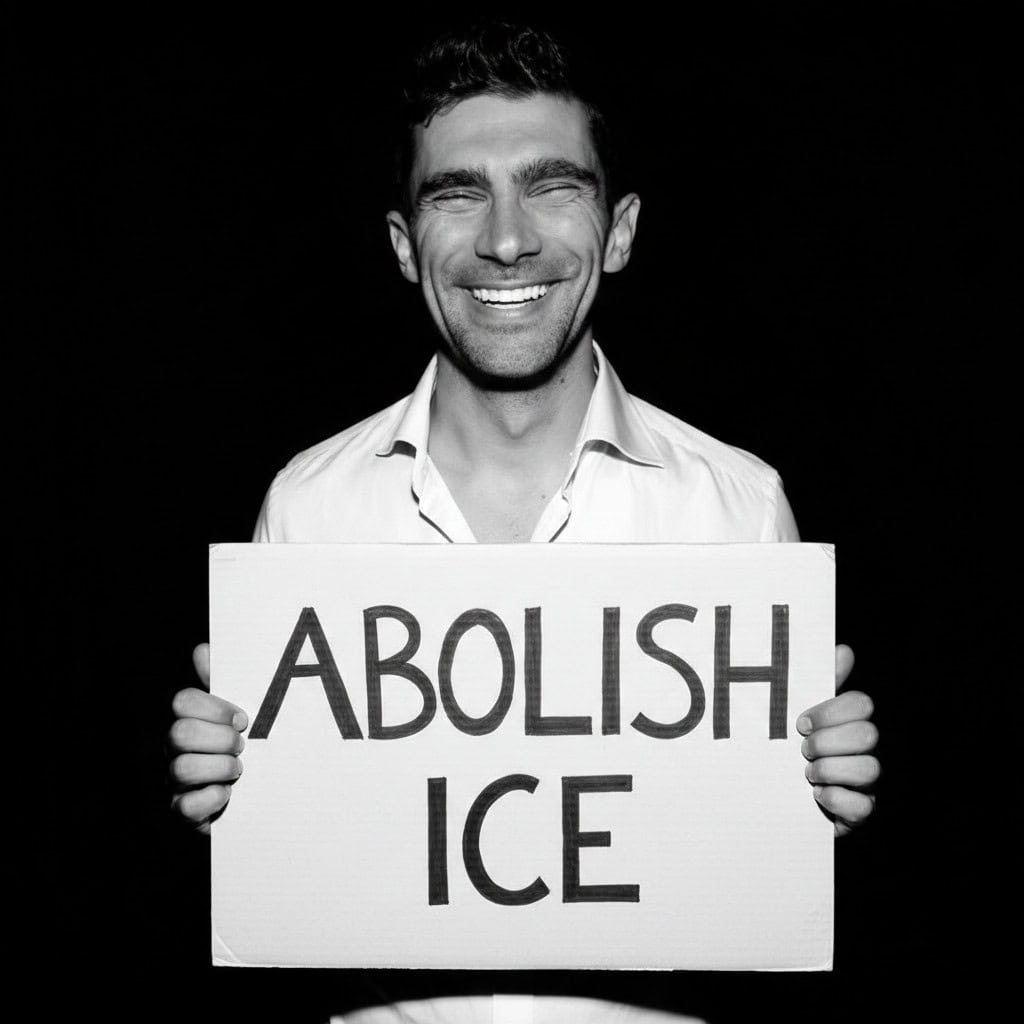

Meet Ben. He spends most of his time managing various departments at the companies he works at. The image above is Ben’s (real) dapper corporate headshot. If you can’t tell, Ben’s a professional guy. Whatever political opinions he has, he keeps to himself, and his work conversations are just about work. But at any given time, Ben’s likeness could be used to spread political propaganda or messages without his consent.

There’s been a lot of reporting recently about how AI-generated videos involving ICE agents and protests have been spreading online. Based on all the tests so far, I concluded that creating fake political propaganda with AI was really easy. I decided to test again, this time using Ben.

With Ben’s permission, I used his headshot to create a deepfake. Here’s the AI-generated image that Nano Banana created:

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Nano Banana | Deepfake / Disinfo | 99.00% AI | 97.00% AI | 98.88% AI | 5.74% AI | 99.00% AI |

It took thirty seconds. All I had to do was drag and drop his headshot into Gemini and prompt it to “hold up a sign that says abolish ICE.” The output from Nano Banana isn’t as photorealistic as the others, but it still looks authentic enough to be persuasive.

Fortunately, four of the detectors accurately identified the image: TruthScan (99% AI), WasItAI (99% AI), AI or Not (98% AI), and Sight Engine (97% AI). Winston AI failed, assigning only a 5% AI score.

#9. “Club Void” (generated by Midjourney)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Midjourney Full Generation | General | 99.00% AI | 99.00% AI | 89.21% AI | 1.56% AI | 70.00% AI |

I generated the image above using Midjourney. The more I looked at it, the more unsettling it felt. The aesthetic is dark and eerie. A shadowy presence seems to be emerging from behind the subject. To create this image, I used Midjourney’s aesthetic reference feature and simply prompted it with “girl standing in a club smiling.” I showed the image to a few people, and they all thought it was real.

Most detectors flagged the image as AI-generated. TruthScan and Sight Engine flagged it with a 99% AI score, and AI or Not said 89%.

WasItAI wasn’t as sure as the other detectors, classifying the image with only a 70% AI score.

Winston AI’s detector utterly failed, giving the image a 1.56% AI score.

#10. “Ice Box” (generated by Midjourney)

How The Image Detectors Scored It

| Image Model | Category | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|
| Midjourney Full Generation | Disinfo | 99.00% AI | 92.00% AI | 97.94% AI | 41.20% AI | 72.00% AI |

I created this image using the same Midjourney method from the previous test. This time, the goal was to generate something a bit darker. TruthScan was the most accurate detector, labeling the image as 99% AI. Sight Engine and AI or Not both correctly detected the image. Winston AI completely failed (41.20%), and WasItAI had a much lower confidence score (72%) than TruthScan, Sight Engine, and AI or Not.

I should say that in this instance, the image I generated could be used or framed in two ways. On one hand, someone could use this (or any political or civil-unrest-themed) type of media in an art or creative project to make a statement. In such a case, the risk seems lower. On the other hand, my main concern with this category of AI-generated media is that nefarious individuals could use it and claim that it’s real.

Recap and results: Best AI image detectors

Okay, now that I’ve shown all of the tests, I’ll recap:

- I used ChatGPT, Nano Banana, and Midjourney to generate 10 AI images.

- I tested five AI image detectors by putting all of the AI images I generated through them.

- TruthScan passed all of the tests and was the most accurate detector. AI or Not passed 8 out of 10 tests, showing some reliability. Sight Engine failed 3 out of 10 tests and demonstrated questionable all-round accuracy. WasItAI failed 4 out of 10 tests and had poor accuracy all round. Winston AI was the least accurate AI image detector, passing only 3 out of 10 tests and consistently misclassifying images.

Comprehensive Test Results

How five popular AI-image detectors performed across fraud, disinformation, deepfakes, and general photography.

All 10 Tests — Full Breakdown

Each score represents the detector’s AI-confidence rating. Threshold for a pass: ≥ 90% AI.

| Test | Category | Source | TruthScan | Sight Engine | AI or Not | Winston AI | WasItAI |
|---|---|---|---|---|---|---|---|
| 1 Man On a Ledge | General | ChatGPT | 99.00% | 99.00% | 77.99% | 0.98% | 1.00% |
| 2 The Fake Receipt | Fraud | ChatGPT | 99.00% | 19.00% | 94.48% | 0.04% | 1.00% |
| 3 Hazard Package | Fraud | Nano Banana | 99.00% | 98.00% | 99.10% | 31.98% | 99.00% |
| 4 Cockroach Meal | Fraud | Nano Banana | 97.15% | 18.00% | 85.87% | 72.29% | 1.00% |
| 5 Autovandalism | Fraud / Disinfo | Nano Banana | 97.48% | 18.00% | 89.52% | 0.02% | 99.00% |
| 6 Soda Musk & Don | Disinfo | Nano Banana | 99.00% | 99.00% | 99.00% | 0.24% | 86.00% |
| 7 Still Alive | Deepfake | Nano Banana | 97.49% | 95.00% | 99.27% | 0.15% | 1.00% |
| 8 Abuse of a Ben | Deepfake / Disinfo | Nano Banana | 99.00% | 97.00% | 98.88% | 5.74% | 99.00% |
| 9 Club Void | General | Midjourney | 99.00% | 99.00% | 89.21% | 1.56% | 70.00% |
| 10 The Ice Box | Disinfo | Midjourney | 99.00% | 92.00% | 97.94% | 41.20% | 72.00% |

Methodology: Each image was submitted once to every detector. A score of ≥ 90% AI is counted as a correct detection. Scores of 70–89% are “near-misses.” Anything below 70% is a fail. AI or Not labeled one generation at 78%, one at 85%, and two at 89%; the 89% scores are treated as near-misses, marked with an asterisk in the summary table.
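The pass, near-miss, and fail bands described above can be sketched in a few lines of Python. This is only an illustration, assuming the scores from the per-image tables; the `classify` and `tally` names are made up for this example. A strict tally like this may not line up exactly with the summary table, where borderline scores are handled with an asterisk.

```python
from collections import Counter

# AI-confidence scores (percent) for tests 1-10, copied from the per-image tables.
SCORES = {
    "TruthScan":    [99.00, 99.00, 99.00, 97.15, 97.48, 99.00, 97.49, 99.00, 99.00, 99.00],
    "Sight Engine": [99.00, 19.00, 98.00, 18.00, 18.00, 99.00, 95.00, 97.00, 99.00, 92.00],
    "AI or Not":    [77.99, 94.48, 99.10, 85.87, 89.52, 99.00, 99.27, 98.88, 89.21, 97.94],
    "Winston AI":   [0.98, 0.04, 31.98, 72.29, 0.02, 0.24, 0.15, 5.74, 1.56, 41.20],
    "WasItAI":      [1.00, 1.00, 99.00, 1.00, 99.00, 86.00, 1.00, 99.00, 70.00, 72.00],
}

PASS_THRESHOLD = 90.0       # >= 90% AI counts as a correct detection
NEAR_MISS_THRESHOLD = 70.0  # from 70% up to (not including) 90% is a near-miss


def classify(score: float) -> str:
    """Map one AI-confidence score to 'pass', 'near-miss', or 'fail'."""
    if score >= PASS_THRESHOLD:
        return "pass"
    if score >= NEAR_MISS_THRESHOLD:
        return "near-miss"
    return "fail"


def tally(scores: list[float]) -> Counter:
    """Count how many scores land in each band."""
    return Counter(classify(s) for s in scores)


# Print one line per detector with its count in each band.
for detector, scores in SCORES.items():
    counts = tally(scores)
    print(f"{detector}: {counts['pass']} pass, "
          f"{counts['near-miss']} near-miss, {counts['fail']} fail")
```

Keeping the two thresholds as named constants makes it easy to see how sensitive the rankings are to the cutoff: lowering `PASS_THRESHOLD` by a single point, for instance, is enough to change how the 89% scores are counted.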

Closing Remarks and Data

In total, between the writing of this article and the rigorous testing, I spent 25 hours putting this report together. Multimodal AI detection tools are still developing, but it’s clear that some are more accurate than others. After testing all the tools, TruthScan has the most accurate AI image detector. The tests speak for themselves.

If you’d like to access a CSV copy of the data from the testing I did in this article, you can find it here. The data spreadsheet I linked contains all of the original prompts and detection results from the tests in this article.
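For anyone who wants to work with the data programmatically, here is a rough sketch that reads rows with Python's standard `csv` module and counts passes per category. The column names and the three inline sample rows are assumptions modeled on the full breakdown table; the real file's layout may differ, and the `passes_by_category` helper is hypothetical.

```python
import csv
import io
from collections import defaultdict

# Hypothetical CSV layout modeled on the full breakdown table; the real file
# may use different column names. To read a downloaded file instead, pass an
# open file object in place of io.StringIO(SAMPLE_CSV).
SAMPLE_CSV = """Test,Category,Source,TruthScan,Sight Engine,AI or Not,Winston AI,WasItAI
The Fake Receipt,Fraud,ChatGPT,99.00%,19.00%,94.48%,0.04%,1.00%
Hazard Package,Fraud,Nano Banana,99.00%,98.00%,99.10%,31.98%,99.00%
Club Void,General,Midjourney,99.00%,99.00%,89.21%,1.56%,70.00%
"""

DETECTORS = ["TruthScan", "Sight Engine", "AI or Not", "Winston AI", "WasItAI"]
PASS_THRESHOLD = 90.0  # >= 90% AI counts as a correct detection


def passes_by_category(csv_file) -> dict:
    """Return {category: {detector: number of scores >= 90%}}."""
    counts = defaultdict(lambda: defaultdict(int))
    for row in csv.DictReader(csv_file):
        for detector in DETECTORS:
            # Scores are stored as text like "94.48%", so strip the sign first.
            score = float(row[detector].rstrip("%"))
            if score >= PASS_THRESHOLD:
                counts[row["Category"]][detector] += 1
    # Convert the nested defaultdicts to plain dicts for easier inspection.
    return {category: dict(per_detector) for category, per_detector in counts.items()}


result = passes_by_category(io.StringIO(SAMPLE_CSV))
print(result)
```

Detectors that never reach the threshold in a category simply don't appear in that category's entry, so a missing key means zero passes.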