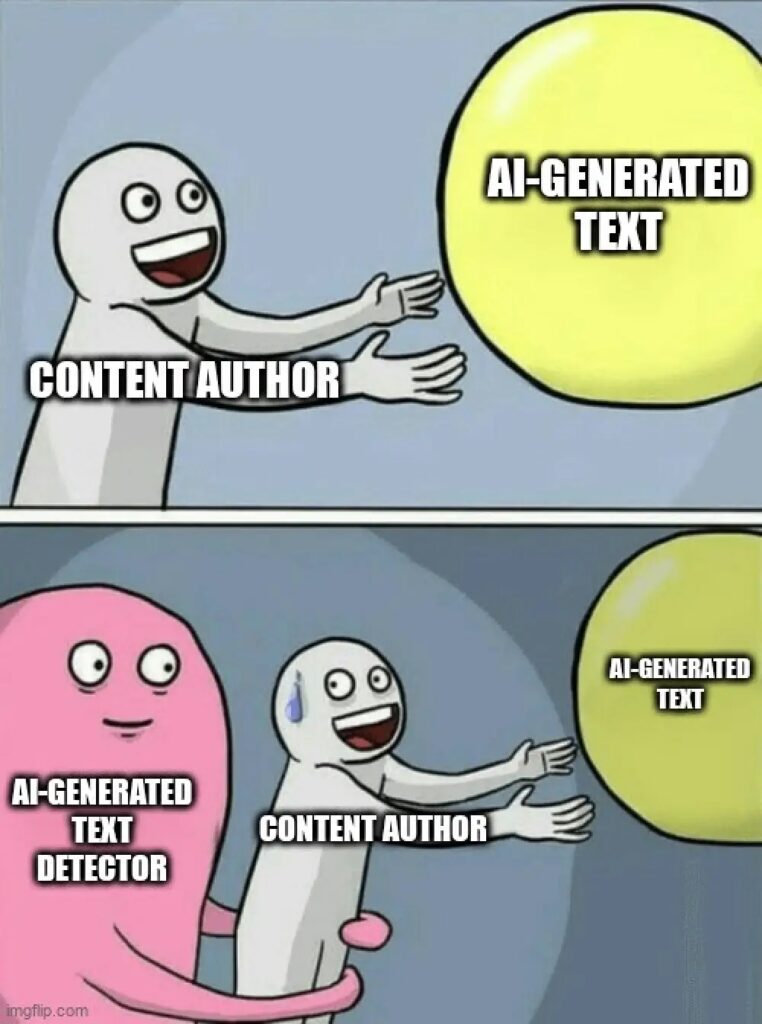

In Universe A: Companies use AI everywhere but don’t actively detect it.

In fact, nearly 3 out of 4 business posts are AI-made at this point, and customers lose trust as a result. Content splits into two worlds: “premium human” vs. “cheap AI.”

In Universe B: Smart companies add AI detection tools. They still use AI for speed, but mark it clearly.

Customers keep trusting them. Content gets better. AI handles routine, humans handle insight.

In Universe C: AI and detectors play cat-and-mouse. AI becomes almost invisible. AI content detectors stop working.

And you know what? We’re living in all three universes at once:

- Every time you post unchecked AI → you’re in A.

- When you use detection smartly → you’re in B.

- When you ignore detection → you risk C.

In this blog, we’ll explore how AI detection works, its limits, and how your business can build smart policies to stay in Universe B, and out of trouble.

Let’s dive in.

Key Takeaways

- AI content detection is only 60–90% accurate, but its real strength is managing risk, trust, and compliance.

- Unchecked AI erodes trust, strategic detection sustains it, and ignoring detection risks chaos. Smart firms choose detection.

- The EU AI Act demands disclosure, and repairing brand damage costs far more than prevention.

- Consumers want AI-labeled content, so disclosure with human oversight builds credibility.

- AI content detection only works when tied to clear policies, checkpoints, escalation paths, and training.

What Is AI Content Detection?

- Definition and Technical Overview

AI content detection means checking if a text was written by a person or created by an AI tool.

AI content detectors work by looking for tiny “fingerprints” that give away machine writing.

- AI fingerprints → Little clues in word choice, sentence flow, and structure that don’t quite match how people naturally write.

Humans add memory, emotion, and intention into their words. AI doesn’t. It just predicts the next most likely word.

That’s why AI text can feel a bit too smooth and lack the natural mix of rhythms you’d see in human writing.

To catch this, detectors focus on two main signals:

- Perplexity → How predictable the text is. If every word feels obvious, it’s probably AI.

- Burstiness → How sentence lengths vary. Humans naturally mix short and long sentences, while AI tends to keep them even.

Example:

A human might write, “This is big. Really big. And it changes everything.” AI is more likely to write, “This is a significant development that will change many aspects of our lives.”
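To make the two signals concrete, here is a minimal Python sketch. It is a toy under stated assumptions, not how any commercial detector is built: burstiness is measured as the spread of sentence lengths divided by their average, and the perplexity function swaps the large language model a real detector would use for a simple word-frequency model built from a reference text. All function names are hypothetical.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text: str) -> float:
    """Spread of sentence lengths (in words) relative to their mean.

    Higher values mean a more varied rhythm. Returns 0.0 when there
    are fewer than two sentences to compare.
    """
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under an add-one-smoothed unigram model.

    Assumption: `reference` stands in for the training data of a real
    language model. Lower values mean more predictable text.
    """
    ref_counts = Counter(tokenize(reference))
    vocab_size = len(ref_counts) + 1  # one extra slot for unseen words
    total = sum(ref_counts.values())
    tokens = tokenize(text)
    if not tokens:
        raise ValueError("text contains no word tokens")
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))
```

Run on the example above, the three short human sentences give a burstiness above zero, while the single AI sentence scores 0.0 because there is no second sentence to vary against.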

- How AI Detectors Work (Watermarks, Statistical Patterns, etc.)

Modern AI content detection tools use two methods to identify AI-generated content:

Method #1: Rule-based detectors

They look for fixed patterns, such as repeated phrases. Common methods include:

- Watermarking → Some AI models quietly favor a hidden “green” list of words and avoid a “red” list, leaving a statistical pattern that a detector can test for.

- Stylometric analysis → Checks sentence length, vocabulary diversity, and whether the style feels too uniform.

- Semantic coherence checks → Humans wander, add side comments, or tell stories. AI stays too perfectly on track.

- N-gram analysis → Breaks text into short word groups to see if phrases match common AI patterns.
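As a rough illustration of the green/red idea, the sketch below is loosely modeled on published green-list watermarking schemes, not on any vendor's actual implementation. It assumes a hypothetical split in which a hash of the previous word and the current word decides whether the current word counts as "green", with `gamma` as the assumed share of green words. The detector counts green words and asks how far that count sits above chance.

```python
import hashlib
import math


def is_green(prev_token: str, token: str, gamma: float = 0.5) -> bool:
    """Pseudo-randomly place `token` on the green list, seeded by the previous token."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") / 2**64 < gamma


def watermark_z_score(tokens: list[str], gamma: float = 0.5) -> float:
    """z-score of the green-token count versus what unmarked text would show."""
    pairs = list(zip(tokens, tokens[1:]))
    if not pairs:
        return 0.0
    green = sum(is_green(prev, tok, gamma) for prev, tok in pairs)
    n = len(pairs)
    return (green - gamma * n) / math.sqrt(n * gamma * (1 - gamma))


def embed_watermark(vocab: list[str], length: int) -> list[str]:
    """Toy generator: always pick the first green word that follows the last one."""
    tokens = [vocab[0]]
    while len(tokens) < length:
        tokens.append(next(w for w in vocab if is_green(tokens[-1], w)))
    return tokens
```

In this toy, a sequence built only from green words scores the square root of the number of word pairs when `gamma` is 0.5, while ordinary text should hover near zero. Rewording swaps words and changes each word's predecessor, which tends to pull the score back toward zero.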

Example of what gets flagged:

- Every sentence is the same length.

- No personal pronouns or human quirks.

- Heavy use of transitions like “in addition” or “furthermore.”
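The three flags above can be sketched as simple rules. This is a minimal illustration, not a production detector: the pronoun list, the cutoff of one word for "same length", and the one-transition-per-two-sentences cutoff are all assumptions picked for the example.

```python
import re
from statistics import pstdev

# Assumed, deliberately short lists for illustration only.
PERSONAL_PRONOUNS = {"i", "me", "my", "we", "us", "our", "you", "your"}
TRANSITIONS = ("in addition", "furthermore")


def rule_based_flags(text: str) -> list[str]:
    """Return the names of the rules that `text` trips."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[a-z']+", text.lower())
    flags = []

    # Rule 1: sentence lengths barely vary (needs at least three sentences).
    lengths = [len(s.split()) for s in sentences]
    if len(lengths) >= 3 and pstdev(lengths) < 1.0:
        flags.append("uniform sentence length")

    # Rule 2: no first- or second-person pronouns anywhere.
    if words and not PERSONAL_PRONOUNS.intersection(words):
        flags.append("no personal pronouns")

    # Rule 3: at least one stock transition per two sentences.
    hits = sum(text.lower().count(t) for t in TRANSITIONS)
    if sentences and hits / len(sentences) >= 0.5:
        flags.append("heavy use of transitions")

    return flags
```

Rules like these are cheap to run and easy to explain, which is also why they are easy to dodge: adding a single "we" or one short sentence clears a flag.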

Method #2: Neural network detectors

Instead of rules, they’re trained on huge sets of human and AI writing.

This lets them pick up on subtle patterns that people wouldn’t notice. Common methods include:

- Transformer attention analysis → Studies how AI models “focus” on words during text generation, revealing unique patterns.

- Statistical signals → Finds text that’s too predictable or too uniform compared to human writing.

- Ensemble approaches → Combines multiple neural models (and sometimes rule-based checks) for higher accuracy.

Strengths:

- More adaptable than rule-based systems.

- Can catch subtle AI text that doesn’t break obvious rules.
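The ensemble idea mentioned above can be sketched as a weighted average. This is a simplified assumption about how scores might be combined. Real products may use voting, stacking, or something else entirely. The `Detector` type and the stub detectors in the usage lines are hypothetical.

```python
from typing import Callable

# A detector takes text and returns a score in [0, 1]; higher means "more likely AI".
Detector = Callable[[str], float]


def ensemble_score(text: str, detectors: list[tuple[Detector, float]]) -> float:
    """Weighted average of several detector scores."""
    total_weight = sum(weight for _, weight in detectors)
    if total_weight <= 0:
        raise ValueError("detector weights must sum to a positive number")
    return sum(detect(text) * weight for detect, weight in detectors) / total_weight


# Usage with two stand-in detectors (fixed scores, for illustration only).
neural_stub: Detector = lambda text: 0.9
rules_stub: Detector = lambda text: 0.3
combined = ensemble_score("some draft copy", [(neural_stub, 2.0), (rules_stub, 1.0)])
```

Weighting lets a team lean on whichever detector has proven more reliable for its own content type without discarding the others.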

How well do these methods work?

The accuracy of current AI content detection tools typically ranges between 60% and 90%, with performance varying based on content type and context.

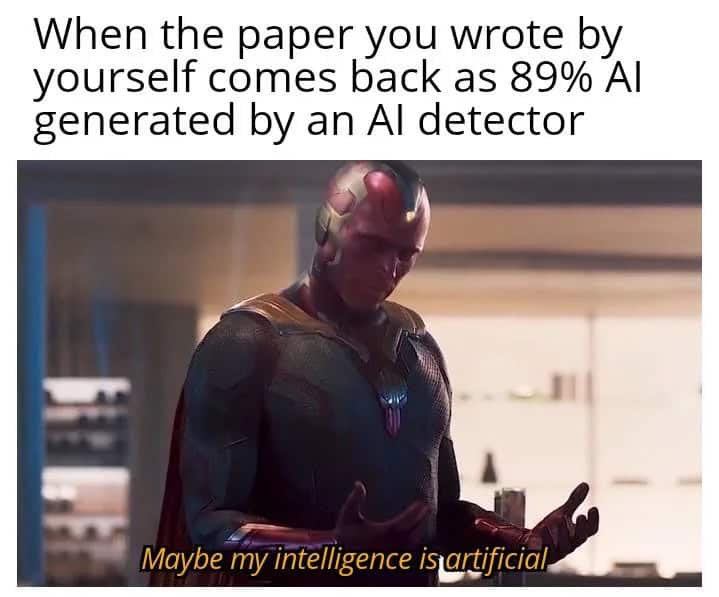

Limitations of Current Detection Technology

AI content detection tools have made big strides, but they are still far from perfect.

In fact, they face several serious challenges that enterprises need to understand.

- Paraphrasing Weakness

A quick rewrite or paraphrase can trick the detectors. Example:

- “The cat sat on the mat” → “The mat was sat on by the cat.”

To humans, the meaning is the same, but to a detector, it looks “new.”
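A quick way to see why is to compare the two sentences the way a pattern-matching detector might, by their word pairs. The sketch below is an assumption-laden toy (the function names are hypothetical, and real detectors use far richer features), but it shows how much surface pattern a small rewrite removes.

```python
import re


def bigrams(text: str) -> set[tuple[str, str]]:
    """Set of adjacent lowercase word pairs in `text`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return set(zip(tokens, tokens[1:]))


def bigram_overlap(a: str, b: str) -> float:
    """Jaccard overlap of word pairs: 1.0 means identical pairs, 0.0 means none shared."""
    pairs_a, pairs_b = bigrams(a), bigrams(b)
    if not pairs_a and not pairs_b:
        return 1.0
    return len(pairs_a & pairs_b) / len(pairs_a | pairs_b)


original = "The cat sat on the mat"
paraphrase = "The mat was sat on by the cat"
overlap = bigram_overlap(original, paraphrase)  # 3 shared pairs out of 9 distinct
```

Only a third of the word pairs survive the rewrite, even though every content word is still there.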

- Domain Blind Spots

Highly structured fields like legal, medical, or technical writing naturally resemble AI text. This can trigger false alarms, even when the content is entirely human-written.

- Language Gaps

Most AI content detectors are trained mainly on English text. They often perform poorly in multilingual or regional contexts.

Non-native English writers sometimes get flagged as AI because their style doesn’t match the “irregularities” of native speakers.

- Version Sensitivity

A detector tuned for GPT-3.5 may fail on GPT-4 or Claude because each model has unique quirks. What looks like AI from one model may pass as human when written by another.

- Watermark Fragility

AI watermarks (hidden token patterns) can be “washed out” if text is:

- Copied into another format

- Reformatted

- Lightly paraphrased

This makes watermarking unreliable as the only safeguard.

For teams reviewing or refining AI-generated text, Undetectable AI’s AI Text Watermark Remover can help clear hidden AI identifiers that might trigger automated detection systems.

It restores the content’s natural readability and tone, making it easier for enterprises to recheck, edit, and approve text without unnecessary flags.

Why Enterprises Need AI Detection

Enterprises need AI content detection for six reasons:

- Regulatory Compliance

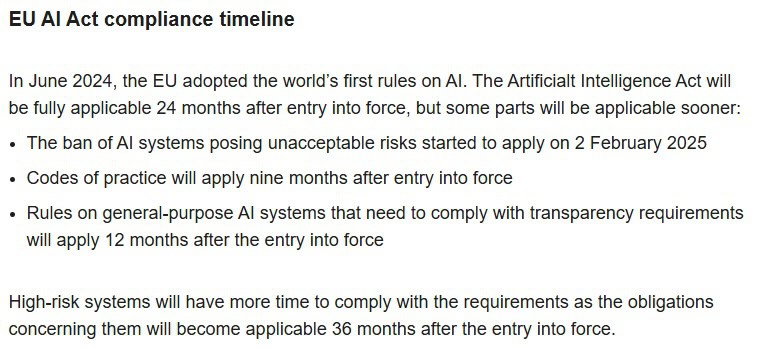

The EU AI Act (2024–25) says that if a company uses AI to create or change content, it must clearly say so. The only exceptions are for things like art or satire.

The EU’s new AI Office will also publish rules on how this labelling should look.

To stay safe, companies need a detection system that can prove when AI was used.

- Brand Integrity

Companies have already been burned by careless AI use:

- CNET had to correct and rewrite dozens of AI-written finance articles after plagiarism and errors were exposed.

- DPD, a delivery company, shut down its chatbot after it started swearing at customers.

- WIRED and Business Insider pulled articles tied to a suspicious “AI freelancer.”

Each turned into a public embarrassment and news story.

Once trust is broken, fixing it costs far more than preventing the problem in the first place.

Launching a new detection-focused tool or platform?

Undetectable AI’s Business Name Generator helps you instantly create distinctive, trustworthy brand names that communicate transparency and credibility, which is essential for AI and compliance-driven solutions.

- Quality Control

AI text often falls flat on authenticity. Half of consumers can already spot it, and over half disengage when they do.

Once disclosed, AI content is rated less original and less emotionally deep.

Detection helps catch weak copy early so humans can polish it before release.

- Competitive Intelligence

Many brands now use AI in marketing and publishing, from fashion firms using Firefly for assets to media outlets testing AI-written articles.

Running AI content detection on competitor blogs, reports, or ads reveals how much they rely on automation, where human creativity still gives you an edge, and how to sharpen your positioning.

- Cost Implications

Undetected AI mistakes can get expensive fast: fake citations that trigger legal risk, PR fires, takedowns, and retractions that erode trust.

A single reputational hit can wipe out market value overnight.

It’s far cheaper to invest in AI content detection, routing, and review than to clean up after a crisis.

- Stakeholder Trust

Consumers and investors increasingly demand clarity.

Surveys show nearly 90% want AI-generated content labelled, and skepticism toward online information is rising.

Studies on ads confirm that disclosure, if done well, maintains trust, but sloppy disclosure erodes it. A consistent detect-and-disclose pipeline is the only scalable path to proving responsible use.

AI Detector and Humanizer is an AI content detection and humanizing tool that can sit in the background and help teams catch and smooth flagged copy before it’s published.

It helps you keep trust intact without slowing the work.

Common Use Cases for AI Detection in Enterprises

- Reviewing Marketing Content

AI content detection tools can screen marketing workflows at scale. For example:

- Email campaigns can be checked to ensure subject lines aren’t generic AI outputs.

- Social media posts can be verified to avoid automated “engagement bait.”

- Website copy can be flagged if it reads too formulaic.

- Ad copy can be reviewed for compliance with FTC rules.

- Validating Corporate Communications

In 2023, CNET had to issue mass corrections after relying on AI for finance articles. This incident showed how risky undetected AI text can be.

The same risk applies across corporate communications, investor relations and executive statements.

AI content detection acts as a safeguard, which makes sure these high-stakes messages stay accurate, authentic, and human.

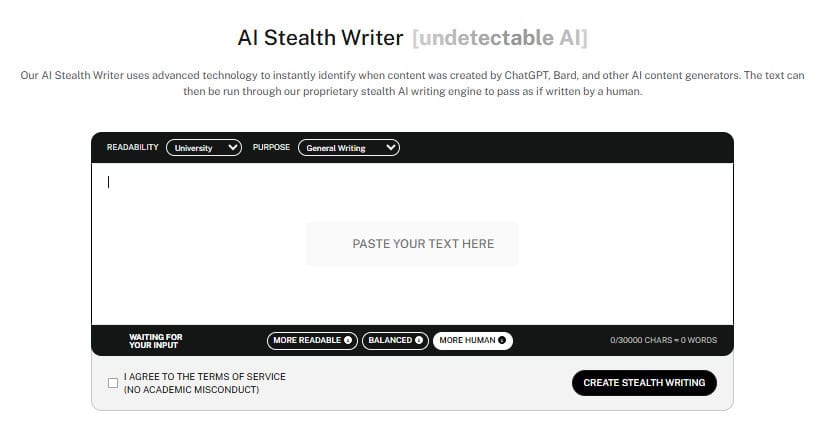

When flagged drafts need refinement, AI Stealth Writer can turn them into undetectable, confident communications.

- Monitoring User-Generated Content

Amazon has an ongoing struggle with AI-generated fake reviews. It shows how easily trust can erode when authenticity isn’t guaranteed.

AI content detection tools can step in to verify that customer reviews are genuine, keep forums free from spammy AI posts, and make sure testimonials actually come from real experiences.

And if content needs reworking rather than removal, AI Stealth Writer makes it seamless. It:

- Refines AI text into a natural, human tone

- Keeps brand voice consistent across channels

- Polishes content to remain undetectable

- Verifying Originality in Internal Training Docs

Internal training content shapes how employees learn, work, and represent the company. If that material leans too heavily on AI, it can create risks.

Detection ensures these materials stay original, precise, and human, so employees can trust what they’re reading and applying in their daily work.

Challenges Enterprises Face With AI Content

Enterprises might face these challenges with AI content:

- Integration drag – API and batch process tie-ins slow adoption.

- Workflow breaks – Detection tools disrupt familiar approval flows.

- Training gaps – Teams stall without clear action steps on flagged content.

- False positives – Wasted time and lost trust when real content gets flagged.

- Inconsistent output – Hard to keep email, web, and social aligned.

- ROI doubts – Without clear metrics, detection feels like a risky spend.

How to Build Internal Policy Around AI Content

Once you understand AI content detection, the next step for any enterprise is to create a clear policy.

Here are the six steps to build an effective internal policy around AI content:

- Define Acceptable vs. Restricted AI Use

The first step in any AI content policy is clarifying what’s allowed and what’s off-limits.

| Acceptable Use | Restricted Use |
| --- | --- |
| Areas where AI can help but doesn’t carry high risk: [SECTOR 1], [SECTOR 2], [SECTOR 3] | High-risk areas where AI output must be carefully controlled or avoided: [SECTOR 1], [SECTOR 2], [SECTOR 3] |

- Establish Review Checkpoints

Set 2–3 checkpoints across relevant teams such as marketing, legal, and communications to ensure AI-generated content is properly reviewed before it’s published or shared.

- Select and Integrate Detection Tools

Choose AI detection tools that fit your workflow. Integrate them into content pipelines so detection happens before distribution.

- Create an Escalation Path

Define what happens when content is flagged:

- Who reviews it?

- Who approves revisions?

- When to escalate to legal or compliance teams.
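An escalation path like this can be written down as explicit routing rules so that nobody has to guess what happens to a flagged draft. The sketch below is one possible shape, not a recommended configuration: the class names, the 0.5 and 0.8 thresholds, and the `high_stakes` switch are all hypothetical and would need to be set by each organization.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PUBLISH = "publish"
    EDITOR_REVIEW = "editor_review"
    LEGAL_ESCALATION = "legal_escalation"


@dataclass(frozen=True)
class EscalationPolicy:
    """Assumed thresholds on a detector score in [0, 1]."""
    review_threshold: float = 0.5
    escalate_threshold: float = 0.8

    def route(self, ai_score: float, high_stakes: bool) -> Route:
        """Decide who sees a draft next, given its score and its risk level."""
        if not 0.0 <= ai_score <= 1.0:
            raise ValueError("ai_score must be between 0 and 1")
        # Strongly flagged, high-stakes content (e.g. investor or legal copy)
        # goes straight to legal or compliance.
        if ai_score >= self.escalate_threshold and high_stakes:
            return Route.LEGAL_ESCALATION
        # Anything else that is flagged gets a human editor.
        if ai_score >= self.review_threshold:
            return Route.EDITOR_REVIEW
        return Route.PUBLISH


policy = EscalationPolicy()
decision = policy.route(ai_score=0.9, high_stakes=True)  # Route.LEGAL_ESCALATION
```

Keeping the thresholds in one small policy object makes them easy to review and adjust during audits.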

- Train Employees

Educate teams on:

- Responsible AI use

- How detectors work

- How to revise AI content for compliance and brand voice

- Audit and Refine Policies Quarterly

Review usage patterns and flagged content. Update policies to reflect new AI content detection tools, model changes, or regulatory requirements.

Example:

| Banking Sector | Consumer Brand |
| --- | --- |
| Low tolerance. AI may only be used for drafts, all client-facing content reviewed. | Higher tolerance. AI can create social posts or ad copy with light oversight. |

Ensure your policy aligns with industry compliance frameworks, such as:

- GDPR → Data privacy obligations

- SEC rules → Disclosure standards for financial communications

Final Thoughts

AI detection tools are not perfect, and they don’t need to be.

Their purpose is to protect trust, keep businesses compliant, and prevent the kind of reputational damage that’s almost impossible to undo.

As AI continues to advance, the real winners will be the companies that manage it with intention.

Detection isn’t just about flagging AI. It’s about showing customers and stakeholders that you value transparency and accountability.

Those who choose to ignore it? They’re gambling with trust, reputation, and the future.

The smarter move is clear: make AI content detection a core part of your strategy, or risk being left behind.

Before you do, leverage Undetectable AI’s AI Detector and Humanizer to verify and humanize content for maximum authenticity, and use the AI Stealth Writer to produce original, undetectable text that fits your brand voice.

Start using Undetectable AI today to stay compliant, trustworthy, and ahead of the competition.