Robots are taking over the world! In years past, that statement would have sounded like a bad thing, but now it might not be all that bad.

There’s been some discourse surrounding how robots, particularly artificial intelligence, have changed our daily lives and various industries.

Whether it’s simplifying the way we get our chores done or automating routine tasks in the office, AI has definitely cemented itself as a frontrunner in innovation.

One of the biggest contributions of AI is content creation.

Chatbots are built with natural language processing (NLP) models to answer queries and simulate human-like responses.

While chatbots can help out with mundane queries, you might want to check for chatbot-written content to make sure that what you read is credible and authentic for its intended purpose, like academic research or journalism.

We’ve got you covered.

Here are the best ways to check if something was written by a chatbot.

The Quick Solution: Use AI Detectors to Identify Chatbot-Written Content

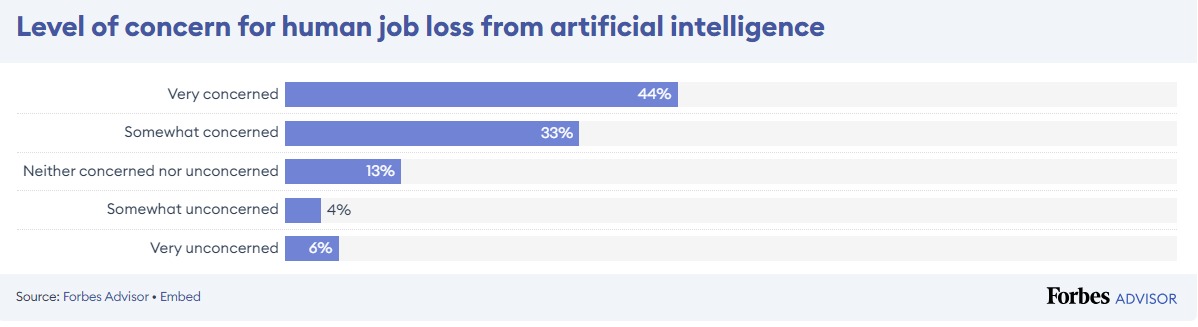

AI is expected to create nearly 100 million jobs, making tasks easier while allowing aspiring workers to explore new areas.

AI-powered content creation tools, for instance, can produce AI-generated text to make the process faster.

But with this comes a concern about authenticity. Where does this apply?

Think of a research paper submitted for a class. A student turns one in, and the teacher notices that the text seems off.

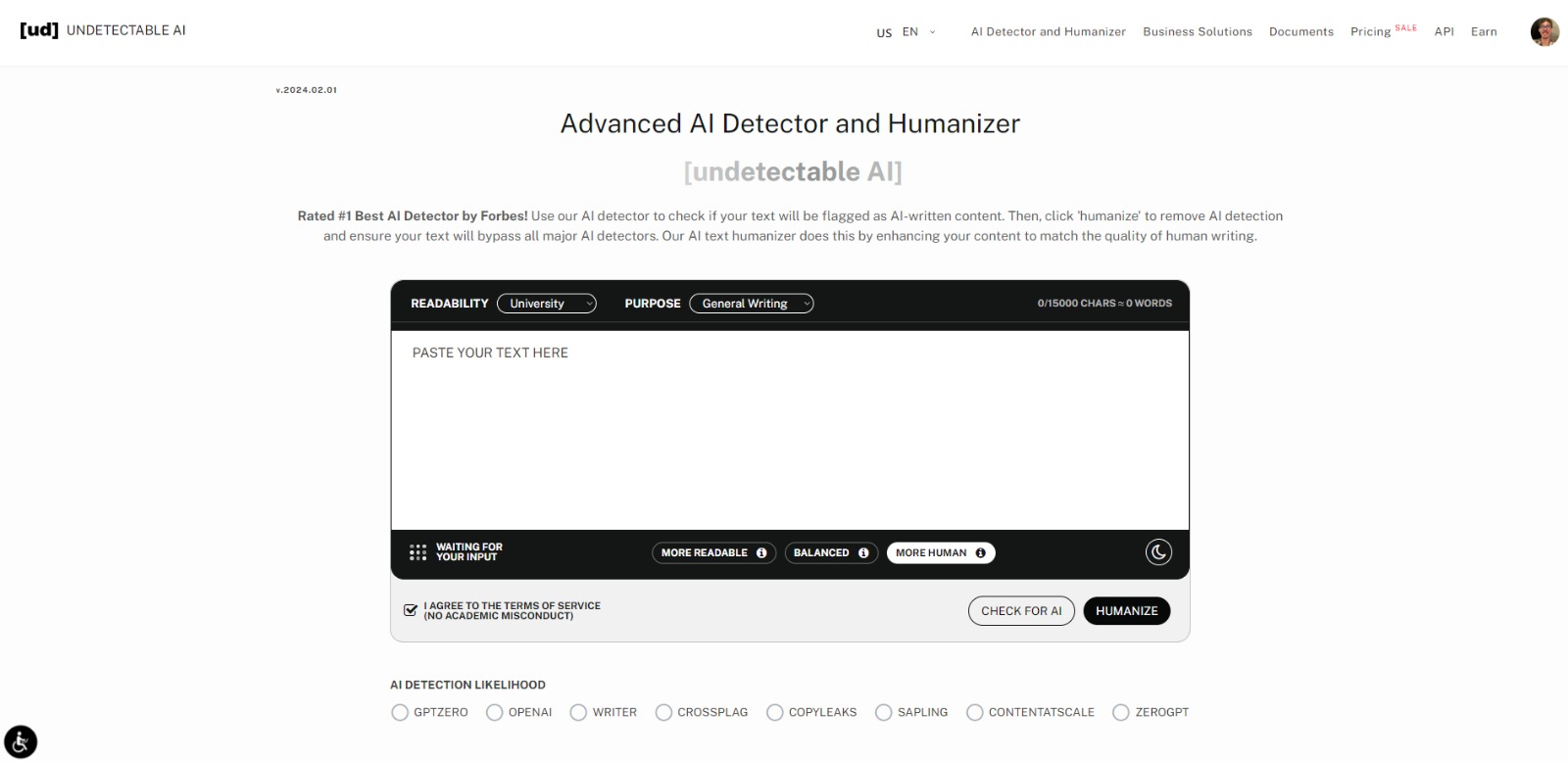

To verify, the teacher could use a trusted AI detector like Undetectable AI to do a quick and effective analysis of the student’s work.

Thanks to the AI detector, the teacher can make an informed decision regarding the authenticity of the papers their students submit and take the appropriate action when necessary.

AI detectors analyze language and structural patterns that are characteristic of AI-generated text.

Chatbot-written content has specific characteristics that make it stand out to the detector, which reviews content in more detail than the naked eye can.

Content creators can also use AI detectors to verify the originality of their work and trace any possible instance of unintentional plagiarism.

So while AI is definitely valuable for generating ideas and inspiration for writing, it’s important to make sure that AI use stays ethical and transparent.

As more and more institutions now allow the adoption of AI, you can also use Undetectable AI to humanize the ideas you get just to be sure that they match the quality of human writing.

It’s a healthy mix of using AI to ease the content creation process while also checking that content stays original and is still uniquely made by you.

Other Indicators That Help You Identify Chatbot-Written Content

AI has become so good that sometimes it blurs the line between human and AI-generated content.

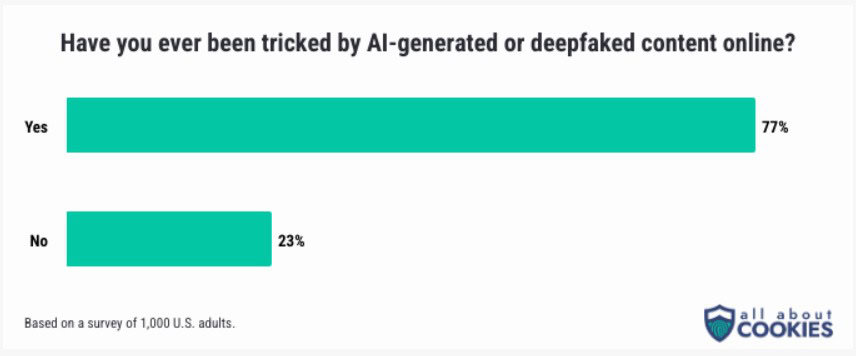

More than seven out of ten individuals admit to being deceived by AI content in some shape or form. As AI evolves and becomes more sophisticated, there should be clearer policies and ethical guidelines set for its proper use.

Source: All About Cookies

While AI detectors work well for identifying chatbot-written content, some specific characteristics can help you manually identify whether it’s AI or not.

1. Unnatural Language

Unnatural language is one of the biggest telltale signs that something was written by AI.

By this, we mean text that reads as robotic and overly formal, with no natural flow.

Some major signs of unnatural language include:

- Excessive use of complex words and technical jargon when it’s unnecessary.

- Lack of casual expressions and creative writing.

- The text can’t maintain a consistent tone and feels patched together.
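To make the first two signs a bit more concrete, here is a minimal Python sketch that counts long words and contractions in a piece of text. It is only an illustration, not how Undetectable AI or any other detector actually works. The function name `language_signals` and the 10-letter cutoff for a "complex" word are assumptions made up for this example.

```python
import re


def language_signals(text: str) -> dict:
    """Return two rough signals of overly formal writing.

    Assumptions (hypothetical, for illustration only):
    - a "complex" word is any word with 10 or more letters
    - a contraction is any word containing an apostrophe, so
      possessives such as "student's" are counted as well
    """
    # Match words, optionally followed by an apostrophe part (straight or curly).
    words = re.findall(r"[A-Za-z]+(?:['’][A-Za-z]+)?", text)
    if not words:
        return {"long_word_ratio": 0.0, "contraction_ratio": 0.0}

    long_words = [w for w in words if len(re.sub(r"['’]", "", w)) >= 10]
    contractions = [w for w in words if "'" in w or "’" in w]

    return {
        "long_word_ratio": len(long_words) / len(words),
        "contraction_ratio": len(contractions) / len(words),
    }


formal = "The implementation necessitates comprehensive methodological considerations."
casual = "I don't think it's that hard, honestly."

print(language_signals(formal))
print(language_signals(casual))
```

A high share of long words together with almost no contractions can hint at the stiff, overly formal style described above. Plenty of human writing looks like this too, so treat the numbers as a prompt for a closer read, not as proof.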

An example here is when a student decides to completely rely on ChatGPT to create their college admission essay.

They type in a prompt, copy, paste, and press send. It’s easy to detect their work as AI because of how overly sophisticated the language is.

The reading level is far beyond that of a typical student, easily raising suspicions of AI-generated content.

AI-generated text tends to sound unnatural because of the models’ limitations. These models simply replicate human speech patterns and try to create their own text based on the prompt.

2. Contextual Understanding

Here’s something frustratingly relatable: You ask a customer service chatbot about a specific issue with a product.

Instead of answering your query directly, the chatbot responds with some generic information like the company’s policies and steps to use the product.

You can’t get more out of the chatbot because this is all it was programmed to do. Disappointed, you reach out to human support, which adds to the wait time.

This is what a lack of contextual understanding is like. Contextual understanding, as the name implies, requires the ability to interpret information within the relevant circumstances.

Human-written work has this because we do our research on a topic when we write something, providing specific and relevant information.

AI content can often feel disjointed and even nonsensical at times. Because it just taps various resources on a surface level, AI struggles to create content that can accurately interpret the nuances of language.

3. Knowledge Limitations

While AI is powerful, it’s not an all-knowing being. AI is not immune to constraints, especially when it comes to more complex knowledge.

But because AI is built to fulfill whatever it is prompted to do, it may still fill in information that is untrue or even non-existent. And even when AI can provide correct data, that data may be outdated.

Here are common reasons why AI has knowledge limitations:

- There’s data bias, meaning AI can only provide more detailed information on well-represented perspectives.

- While AI models are trained on huge datasets, the scope of their knowledge is not infinite and has to stop somewhere.

- AI usually struggles to keep up with trends and lacks the latest information.

Relying solely on AI content is risky because it can spread misinformation.

For example, an AI-generated article on advanced medical procedures may well contain inaccuracies, which can be harmful if it is used for research.

4. Writing Style Inconsistencies

Have you read an article online where the introduction looked really engaging, but then the following sections fizzled out and felt like a completely different writer wrote them?

That article you read might just be AI-generated. AI content is infamous for being inconsistent with its writing style.

It’s true that AI models are great at mimicking human language. But they often struggle to stay consistent in one distinct writing style.

Here’s what inconsistencies in writing style usually look like with AI:

- The tone quickly shifts from formal to informal or professional to overly casual within the same piece of content.

- The writing lacks a coherent flow and reads as disjointed.

- The text is hard to read because it juggles too many perspectives, or offers too few.
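As a rough illustration of the first point, the sketch below compares paragraphs within one piece of text. It uses average word length as a crude stand-in for formality, which is an assumption made for this example and not an established measure. The function names `avg_word_length` and `tone_spread` are hypothetical as well.

```python
import re


def avg_word_length(paragraph: str) -> float:
    """Average number of letters per word (crude formality proxy, an assumption)."""
    words = re.findall(r"[A-Za-z]+", paragraph)
    return sum(len(w) for w in words) / len(words) if words else 0.0


def tone_spread(text: str) -> float:
    """Gap between the most and least 'formal' paragraph.

    Paragraphs are assumed to be separated by blank lines.
    A larger gap suggests the tone shifts within the same piece.
    """
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    scores = [avg_word_length(p) for p in paragraphs]
    return max(scores) - min(scores) if scores else 0.0


sample = (
    "Organizations increasingly prioritize sustainability.\n\n"
    "Lol ok so it is fun."
)
print(round(tone_spread(sample), 2))
```

A big gap between paragraphs only tells you where to look. A human writer can shift tone on purpose, so read the flagged sections yourself before drawing any conclusion.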

Being aware of these inconsistencies helps you judge whether the content you’re reading is credible.

5. Error Patterns

To err is human (and machine). Even the smartest robots we have today make mistakes.

Error patterns are a common red flag for AI-generated content. The AI model itself doesn’t always work as intended, and the organization publishing the content may not run a quality check on the text and information the tool provides.

In short, AI was used to automate the content generation process, and while that isn’t bad in itself, it can go wrong if left unchecked.

The mistakes can range from grammatical errors to spelling mistakes, contradicting statements, and even ideas that don’t make much logical sense.

Why does this happen? AI is just software with complex algorithms, which opens the door to bugs and glitches.

The quality of data used to train the AI models can also influence the tool’s performance.

6. Verification of Facts or Personal Details

Another thing that AI doesn’t do well is verifying the information it provides. Misinformation from AI is a concern for over 75% of consumers.

The inability of AI to verify facts or personal details can be dangerous. Misinformation, in general, can lead to misunderstandings and can even affect individuals and organizations.

These are a few ways to avoid getting misinformed by AI:

- We humans are great cross-checkers, so always check the sources provided in the content you read.

- Exercise some critical thinking skills and avoid believing everything at first glance.

- Especially when it comes to high-stakes content, make sure there’s human oversight to see that the content is accurate.

- Take the time to learn the intricacies of AI and understand its capabilities and limitations.

AI has a hard time providing accurate data because it relies only on the information it was given. This means that newer information may not be available to it.

AI also doesn’t cross-check multiple sources or validate the authenticity of information as well as humans do.

Conclusion

With these indicators, you can be more confident about the content you read.

AI is only going to get better, so it’s important to keep sharpening how you check if something was written by a chatbot, because the rules will change and we’ll have to adapt.

We consume so much content online that it can easily feel tiring to be this attentive to whatever we see. To simplify the process, have Undetectable AI alongside you.

Undetectable AI makes AI detection easy and efficient, and we can even enhance the readability of the content you generate with our AI humanizer.

That way, you can be at ease with the content you produce yourself. It’s two tools on one platform.

As we embrace the benefits of AI technology, let’s prioritize its ethical use and combat misinformation to maintain the integrity of communication.